Most AI teams focus on the model. That makes sense. The model is what people see: it produces the output, gets the praise, and carries the demo. But in many cases, the real edge is not just the model itself. It is how you train it. That training flow may be the part that makes your system faster, cheaper, more stable, more accurate, easier to update, or better suited to a narrow task. It may be the reason your results look strong while others using similar base models still struggle. And if that is where your real advantage lives, you need to know how to claim it the right way in a patent.

Finding the Real Invention Inside Your Training Process

The hardest part of claiming a model training method is not writing the patent language.

The hardest part is seeing your own work clearly. Many teams sit on valuable patent material and miss it because they look at the wrong layer of the system.

They look at the finished model, the benchmark score, or the product feature. But the patent-worthy value often lives deeper inside the training process itself.

It lives in the choices your team made when the normal path did not work well enough.

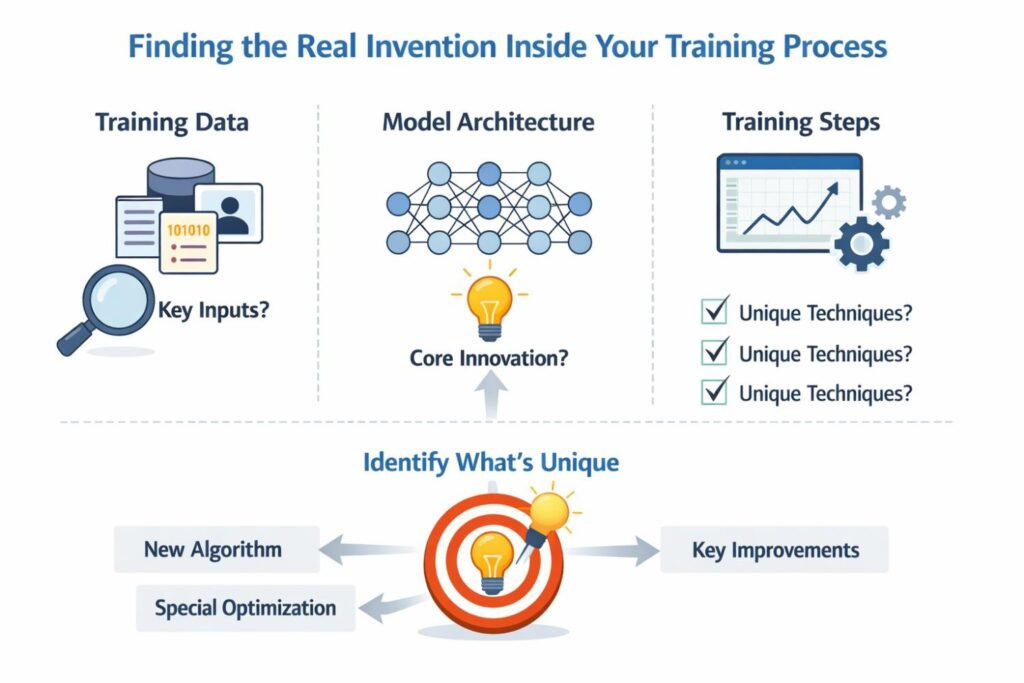

Why Most Teams Look in the Wrong Place

A useful starting point is to accept one simple fact. Your invention is often not the broad idea of training an AI model for a task. It is usually the narrower method that got you a better result in a way others would not naturally arrive at on a first try.

Businesses that understand this early make better filing choices and protect the right layer of their advantage.

Many founders make the mistake of describing their work in business terms only. They say they built a better fraud model, a more accurate medical prediction engine, or a smarter assistant for legal review.

That may help in a pitch deck, but it does not help much when you are trying to identify patent value. The better question is this: what had to be true during training for that result to happen?

That shift in thinking changes everything. It moves your attention away from the headline result and toward the repeatable method that produced it. That is where the real protection often begins.

The Invention Is Often Hidden in the Training Choices

Most valuable model training inventions are not loud. They are tucked inside the flow of decisions that your engineers made while trying to solve a hard problem.

The invention may be the order of steps. It may be the moment where the system switches from one kind of update to another. It may be the way low-quality data is handled instead of thrown away.

It may be the rule that decides which examples deserve more weight during retraining.

These details matter because they create the business outcome. A faster training cycle reduces cost. A more stable training process lowers failure risk. A cleaner way to adapt to new customer data improves retention.

A method that keeps performance strong while using less labeled data may open a market that was too expensive before. When you view training choices through that business lens, you start to see which technical details deserve protection.

Start With the Problem Before You Start With the Method

Before you define the invention, define the training problem your team faced. Every strong patent story becomes clearer when it begins with a real technical pain point.

That pain point gives shape to the method. It also makes the claim easier to support because it explains why the steps exist at all.

Maybe your model overfit on customer-specific data. Maybe it broke when fresh data arrived from a new environment. Maybe labels were too costly, too noisy, or too delayed.

Maybe compute cost made retraining impossible at the pace the business needed. Maybe your team had to train with privacy limits that blocked central data collection.

These are not side notes. They are the ground truth that helps you locate the invention.

A business should build the habit of documenting those moments. When a technical team says, “we had to redesign the training flow because the normal way failed,” that is usually a signal worth capturing. Many strong patents start there.

Ask What Changed After the First Attempt Failed

The most revealing question in this process is simple: what changed after the first obvious approach failed? This question helps separate a routine training setup from a real invention.

Businesses should use it in internal invention reviews, technical audits, and IP capture meetings because it forces teams to name the actual change that mattered.

The answer is rarely “we trained a model.” The answer is more likely to sound like this: we changed how examples were selected based on uncertainty. We staged training in phases so the model learned structure before edge cases. We updated only part of the network when drift passed a threshold.

We generated synthetic samples, but only after matching them to observed failure patterns. We altered the reward signal so the model would stop gaming a shallow metric.

That is the kind of shift that turns routine work into protectable method design. It is also the kind of detail that competitors may try to copy once they see your product succeed.

Look for Repeated Decisions, Not One-Time Tweaks

A useful way to separate noise from patent value is to focus on repeated decisions inside your training system.

One-time cleanup steps or ad hoc fixes may be useful, but they often do not carry the same long-term value as a repeatable method.

Businesses should pay close attention to the rules, conditions, and control points that keep showing up every time the training system runs.

If your platform repeatedly routes new data through a filtering stage before fine-tuning, that is more interesting than a single manual cleanup event.

If your pipeline consistently scores training samples before choosing update intensity, that is more interesting than one engineer’s judgment call during an experiment.

Repeatability matters because method claims protect processes, and a claimed process is stronger when it reflects an ongoing system design rather than a lucky exception.

Where Businesses Often Find the Strongest Patent Value

For many AI companies, the strongest patent value is not in the model architecture itself. It is in the practical training method that makes the business work in the real world.

That may include how data is prepared, how labels are checked, how updates are scheduled, how system feedback enters training, or how customer-specific adaptation is handled without breaking the base model.

This matters for business strategy because competitors often can access similar model families, similar open tools, and similar public papers. What they do not have is your exact training logic.

If your edge comes from how you train under real constraints, that is usually worth more than a broad claim about the final output.

The training process may be the hardest thing for others to discover and the easiest thing for your business to understate by accident.

Your Secret Sauce May Be in Data Handling

Training data decisions are one of the richest places to find real inventions. Businesses often overlook this because they assume data work is too ordinary to patent. That is not always true.

What matters is not that you used data. What matters is how your system handles that data in a technical, structured, and useful way during training.

When Data Selection Becomes an Invention

A training process can become patent-worthy when the system does not treat all data points the same.

If your method chooses examples based on error type, signal confidence, source reliability, recency, customer segment, or downstream failure patterns, that may be part of the invention.

The key is whether that selection process changes the technical result in a meaningful way.

For a business, this can create a major moat. It means your training system improves from the right data at the right time rather than wasting resources on everything equally.

That can lower cost, shorten cycles, and improve accuracy where customers care most.
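The Python sketch below makes this kind of selection concrete. It is an illustration, not a recommended recipe. It assumes each sample carries a model confidence, a source reliability score, and an age in days. The `Sample` fields, the half-life decay, and the multiplicative score are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    # Hypothetical per-sample metadata; real pipelines track their own signals.
    sample_id: str
    confidence: float          # model confidence on this sample, 0 to 1
    source_reliability: float  # trust in the data source, 0 to 1
    age_days: float            # days since the sample was collected


def selection_score(s: Sample, half_life_days: float = 30.0) -> float:
    """Favor uncertain samples from reliable sources, discounted by age."""
    uncertainty = 1.0 - s.confidence
    recency = 0.5 ** (s.age_days / half_life_days)  # halves every half_life_days
    return uncertainty * s.source_reliability * recency


def select_for_training(samples: list[Sample], k: int) -> list[Sample]:
    """Keep the k highest-scoring samples instead of using every sample equally."""
    return sorted(samples, key=selection_score, reverse=True)[:k]


pool = [
    Sample("a", confidence=0.95, source_reliability=0.9, age_days=1),
    Sample("b", confidence=0.40, source_reliability=0.9, age_days=2),
    Sample("c", confidence=0.30, source_reliability=0.2, age_days=1),
    Sample("d", confidence=0.50, source_reliability=0.8, age_days=90),
]
print([s.sample_id for s in select_for_training(pool, k=2)])
```

In this toy pool, the 90-day-old sample `d` ranks below the fresher but less reliable sample `c`. Changing `half_life_days` shifts that balance. That is the kind of selection rule whose effect on the training result is worth writing down.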

When Data Cleaning Is More Than Cleaning

Data cleaning sounds basic, but some forms of cleaning are really training control methods in disguise.

If your system detects weak labels, repairs them using cross-model agreement, or drops corrupted examples based on a rule tied to training stability, you may have more than housekeeping. You may have a technical process that deserves a claim.

Businesses should not dismiss these methods as back-office work. In many domains, data quality is the whole game.

The company that can train reliably from messy, limited, or changing data often wins. Protecting that process can matter more than protecting a model name.
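Here is a hedged sketch of label repair by cross-model agreement. It assumes several independently trained models each predict a label for an example. A label is replaced only when every model contradicts it. The example is dropped when the models disagree too much among themselves. The function name and both thresholds are hypothetical, and dropping on disagreement stands in for whatever stability rule a real pipeline uses.

```python
from collections import Counter


def repair_or_drop(label, model_predictions, min_agreement=1.0, drop_below=0.5):
    """Return (action, label) for one example, where action is
    "keep", "repair", or "drop", based on predictions from several models."""
    votes = Counter(model_predictions)
    top_label, top_count = votes.most_common(1)[0]
    agreement = top_count / len(model_predictions)

    if agreement < drop_below:
        # The models cannot agree: treat the example as too ambiguous to train on.
        return "drop", None
    if top_label != label and agreement >= min_agreement:
        # Enough models contradict the given label (all of them, by default): replace it.
        return "repair", top_label
    return "keep", label


examples = [
    ("cat", ["cat", "cat", "cat"]),
    ("dog", ["cat", "cat", "cat"]),
    ("cat", ["cat", "dog", "bird", "fish"]),
    ("dog", ["cat", "cat", "dog"]),
]
for label, preds in examples:
    print(label, preds, "->", repair_or_drop(label, preds))
```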

Synthetic Data Can Be Claim Gold When It Is Used With Purpose

Synthetic data alone is not enough. But a specific method for generating, screening, mixing, or sequencing synthetic data during training can be highly valuable.

The inventive part may lie in how synthetic examples are matched to observed failure zones, how they are weighted against real data, or how the system uses them only under defined training conditions.

From a business angle, this can be powerful because synthetic data often unlocks scale where real data is rare, expensive, slow, or risky to collect.

If your training process turns that idea into a repeatable technical method, it should be reviewed as serious IP.
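The Python below sketches one possible shape for failure-matched synthetic data, under stated assumptions. A synthetic-sample budget is split across failure categories in proportion to how often each category failed. Synthetic samples then get a lower training weight than real ones. The category names, the proportional split, and the 0.5 weight are illustrative placeholders, not a known-good setting.

```python
def allocate_synthetic(failure_counts: dict[str, int], budget: int) -> dict[str, int]:
    """Split a synthetic-sample budget across failure categories in proportion
    to how often each category failed (largest-remainder rounding)."""
    total = sum(failure_counts.values())
    if total == 0:
        return {cat: 0 for cat in failure_counts}
    raw = {cat: budget * n / total for cat, n in failure_counts.items()}
    alloc = {cat: int(v) for cat, v in raw.items()}
    # Hand leftover samples to the categories with the largest fractional parts.
    leftover = budget - sum(alloc.values())
    by_remainder = sorted(raw, key=lambda c: raw[c] - alloc[c], reverse=True)
    for cat in by_remainder[:leftover]:
        alloc[cat] += 1
    return alloc


def training_weight(is_synthetic: bool, synthetic_weight: float = 0.5) -> float:
    """Down-weight synthetic samples relative to real ones during training."""
    return synthetic_weight if is_synthetic else 1.0


# Hypothetical failure counts observed in production.
failures = {"low_light": 60, "occlusion": 30, "rare_class": 10}
print(allocate_synthetic(failures, budget=7))
```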

The Sequence of Training Steps Can Be the Core Asset

A lot of companies think invention means a new component. But sometimes the invention is the order in which familiar components are used. Sequence matters because training is not just a bag of parts.

It is a controlled process. The way your system moves from one stage to the next may be exactly what produces the gain.

Stage-Based Training Often Hides Valuable IP

If your team trains in phases, ask why. Maybe the first phase builds general structure, the second focuses on hard cases, and the third adapts to customer drift.

Maybe your system freezes certain parameters early, then opens them later when confidence improves. Maybe it uses one objective first and another objective later to reduce instability.

Those stage decisions may be highly claimable because they define the method, not just the ingredients.

For businesses, staged training often creates more predictable performance and lower cost. That makes it commercially meaningful as well as technically specific.
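A staged plan like the one described above can be written down as data plus a switching rule. The Python sketch below assumes three hypothetical parameter groups (`head`, `upper`, `lower`), two objective names, and made-up confidence thresholds. It only decides which groups are frozen and which objective applies. The actual training is left to whatever framework a team uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    trainable_groups: frozenset  # parameter groups updated in this stage
    objective: str               # loss used in this stage
    advance_at: float            # mean validation confidence needed to move on


# Hypothetical plan: lower layers stay frozen until confidence improves.
PLAN = [
    Stage("warmup", frozenset({"head"}), "cross_entropy", 0.80),
    Stage("hard_cases", frozenset({"head", "upper"}), "focal", 0.90),
    Stage("full_finetune", frozenset({"head", "upper", "lower"}), "cross_entropy", float("inf")),
]


def next_stage_index(current: int, mean_confidence: float) -> int:
    """Advance at most one stage when confidence clears the current stage's bar."""
    if current < len(PLAN) - 1 and mean_confidence >= PLAN[current].advance_at:
        return current + 1
    return current


def is_frozen(group: str, stage_index: int) -> bool:
    """A group is frozen in any stage that does not list it as trainable."""
    return group not in PLAN[stage_index].trainable_groups


stage = 0
for confidence in [0.70, 0.82, 0.85, 0.93, 0.97]:  # made-up validation readings
    stage = next_stage_index(stage, confidence)
print(PLAN[stage].name, PLAN[stage].objective, is_frozen("lower", stage))
```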

Switching Logic Is More Valuable Than It Looks

One of the best places to find patent value is the logic that decides when training should change course.

That switch may happen based on loss trends, confidence scores, data drift, user feedback, hardware limits, or performance on a narrow slice of examples. The switch itself can be the invention when it controls how the system learns.

This is strategic because switching logic is often invisible from the outside. A competitor may see that your product performs well, but they may not know that the real reason is your internal rule for when the training pipeline moves from one mode to another.

That kind of hidden method can be highly defensible if captured early and described well.
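Switching logic is often simple to express even when it is hard to see from the outside. The Python below is a minimal sketch that assumes the trigger is a loss plateau. It compares the best loss in the latest window with the best loss in the window before it. When the improvement falls below a minimum, it switches modes. The window size, the `min_delta` value, and the mode names are hypothetical.

```python
def loss_has_plateaued(losses, window=3, min_delta=0.01):
    """True when the best loss in the latest window beats the best loss in the
    window before it by less than min_delta (an absolute improvement)."""
    if len(losses) < 2 * window:
        return False  # not enough history to judge a trend
    previous_best = min(losses[-2 * window:-window])
    recent_best = min(losses[-window:])
    return previous_best - recent_best < min_delta


def choose_mode(losses, current_mode):
    """Hypothetical switch from broad updates to hard-example updates."""
    if current_mode == "broad" and loss_has_plateaued(losses):
        return "hard_examples"
    return current_mode


improving = [1.0, 0.8, 0.6, 0.5, 0.4, 0.3]
flattening = [1.0, 0.8, 0.6, 0.5, 0.5, 0.498, 0.497]
print(choose_mode(improving, "broad"))   # stays "broad"
print(choose_mode(flattening, "broad"))  # switches to "hard_examples"
```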

Focus on the Feedback Loop, Not Just the Training Pass

Many modern AI systems do not train once and stop. They learn through a loop. They collect signals, score outcomes, pull in new examples, and retrain or adjust in response.

When businesses think about patent strategy, they should not isolate the training pass from the larger feedback system around it. The loop may be where the invention lives.
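A feedback loop can be reduced to a few steps: collect a signal, score it, keep the useful examples, and decide when to retrain. The Python sketch below assumes feedback arrives as user corrections with a severity score. The `FeedbackBuffer` class, its thresholds, and the count-based retraining trigger are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FeedbackBuffer:
    """Hypothetical buffer that turns user feedback into retraining data."""
    retrain_after: int = 3     # stored corrections needed before retraining
    min_severity: float = 0.5  # ignore low-impact feedback
    pending: list[tuple[str, str]] = field(default_factory=list)

    def record(self, model_input: str, corrected_output: str, severity: float) -> bool:
        """Store one correction if it matters; return True when retraining should run."""
        if severity >= self.min_severity:
            self.pending.append((model_input, corrected_output))
        return len(self.pending) >= self.retrain_after

    def drain(self) -> list[tuple[str, str]]:
        """Hand pending examples to the training job and reset the buffer."""
        batch, self.pending = self.pending, []
        return batch


buf = FeedbackBuffer()
incoming = [("q1", "a1", 0.9), ("q2", "a2", 0.2), ("q3", "a3", 0.7), ("q4", "a4", 0.6)]
for model_input, corrected, severity in incoming:
    if buf.record(model_input, corrected, severity):
        print("retrain on", buf.drain())
```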

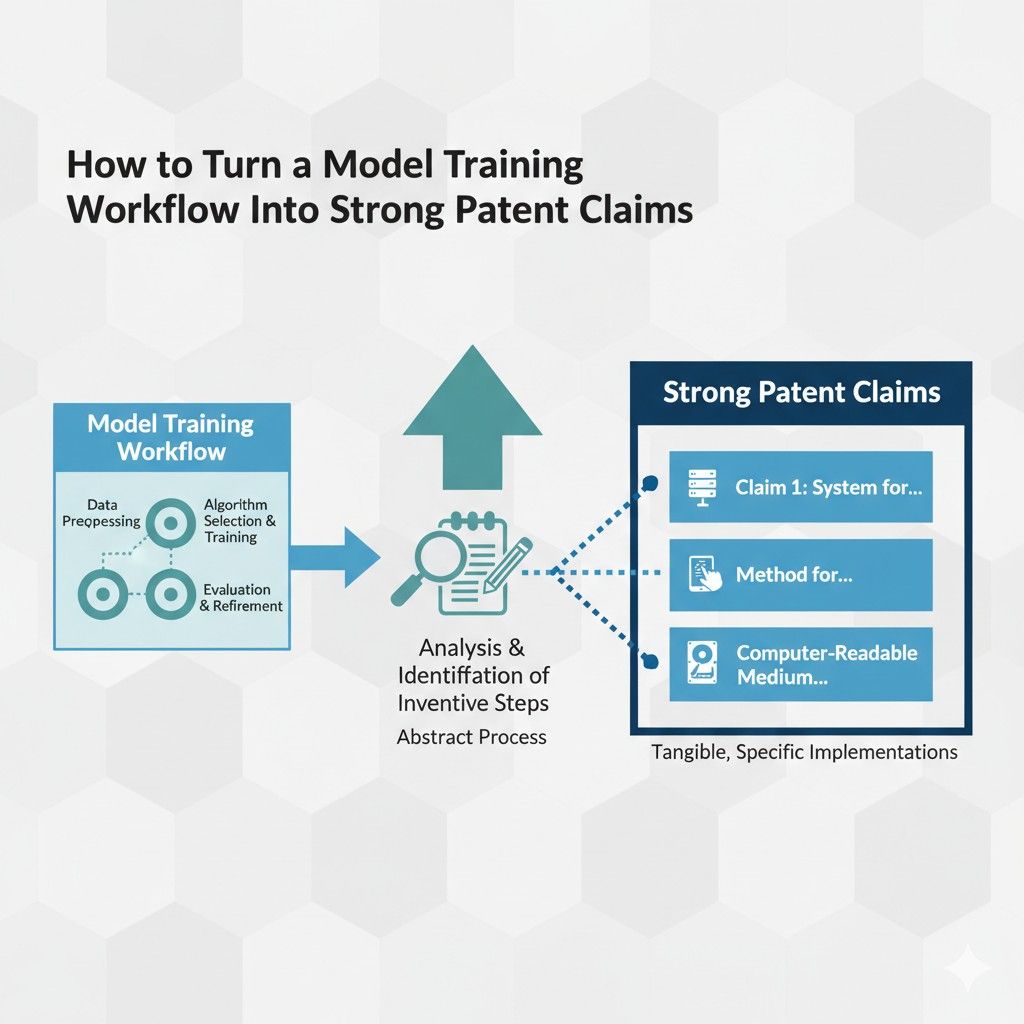

How to Turn a Model Training Workflow Into Strong Patent Claims

A smart model training workflow does not become a strong patent claim on its own. There is a gap between good engineering and good protection. That gap is where many businesses lose value.

They build something real, something useful, and sometimes something hard to copy, but they describe it in a way that is too loose, too narrow, or too tied to one version of the code.

The goal is not just to explain what your team built. The goal is to turn that work into claim language that covers the real business advantage.

Start With the Method, Not the Hype

The first move is to strip away the marketing layer. A patent claim cannot rest on phrases like smarter training, better tuning, or improved AI performance.

Those phrases may sound strong in a pitch, but they do not give your invention enough shape. A claim needs a real method. It needs steps, conditions, inputs, changes, and results that connect in a clear way.

That is why businesses should begin with the raw workflow itself. Look at what happens before training starts, during the update process, and after each round of model adjustment.

Then ask what part of that flow actually creates the improvement. In many cases, the answer is not the full pipeline. It is a narrower core inside the pipeline. That core is what the patent claim should revolve around.

A Patent Claim Is Not a Technical Summary

Many teams make the mistake of treating a patent claim like a cleaned-up engineering note. That usually leads to weak protection. A technical summary explains what the system does.

A claim defines what others are not allowed to do without stepping into your territory. That is a very different job.

This is why businesses need to think in terms of boundaries. When you write a claim, you are not only telling the story of your workflow. You are drawing a line around the parts that matter most.

A strong claim captures the key steps broadly enough to stop easy copycats, but clearly enough to stand on real technical ground. That balance is where the real work is.

Find the Smallest Set of Steps That Creates the Advantage

A common mistake is putting too many details into the main claim too early. That can make the claim fragile.

If a competitor can skip one narrow detail and still get the same result, your protection becomes easier to avoid. The better move is to identify the smallest set of steps that still creates the advantage you care about.

Focus on the Cause, Not Every Implementation Detail

Your model training workflow may include dozens of engineering choices. Not all of them belong in the main claim.

Some are useful, but not central. Others are central because they are the reason the workflow works better than ordinary training. The task is to separate cause from decoration.

For example, a business may have built a workflow where noisy samples are scored, low-confidence examples are handled differently, and model updates are routed through separate paths based on error type.

The important question is not whether one script used a certain library or one stage ran on a certain server. The important question is which steps caused the better result. That is the level where strong claims begin.
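To illustrate the difference between cause and decoration, here is a hypothetical Python version of the routing step in that example. Samples below a confidence cutoff go to a review queue. Correct samples go to a replay pool. Errors are grouped into one update path per error type. The dictionary keys, the cutoff, and the path names are assumptions. The routing rule is the part that would matter, not the library or server it runs on.

```python
from collections import defaultdict


def route_updates(samples, low_confidence=0.6):
    """Group samples into update paths.

    Each sample is a dict with hypothetical keys "id", "confidence", and
    "error_type" (None when the model was right).
    """
    paths = defaultdict(list)
    for s in samples:
        if s["confidence"] < low_confidence:
            paths["review_queue"].append(s["id"])  # held out of automatic updates
        elif s["error_type"] is None:
            paths["replay"].append(s["id"])        # correct, kept for stability
        else:
            paths[f"fix_{s['error_type']}"].append(s["id"])  # error-specific path
    return dict(paths)


batch = [
    {"id": 1, "confidence": 0.9, "error_type": None},
    {"id": 2, "confidence": 0.4, "error_type": "label_noise"},
    {"id": 3, "confidence": 0.8, "error_type": "false_positive"},
    {"id": 4, "confidence": 0.7, "error_type": "false_positive"},
]
print(route_updates(batch))
```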

Keep the Core Claim Clean

A strong core claim should feel tight. It should describe the key method without drowning in optional details.

Those extra details still matter, but they often belong in supporting claims, not the first one. Businesses that understand this protect more value because they do not confuse the center of the invention with one narrow build of it.

This also helps when your product changes. A clean core claim has a better chance of staying useful as your stack evolves. That matters a lot in AI, where model families, tools, and training settings can shift quickly.

Turn Workflow Steps Into Legal Building Blocks

A training workflow is often described in engineering language. Patent claims need something more structured.

They need each step to work like a block in a chain. One block sets up the next. Each one has a role. Together they create a method that can be tested, understood, and enforced.

Translate Actions Into Method Steps

This translation process is highly practical.

Businesses should look at their workflow and restate it as a sequence of acts. Instead of saying the system improves training quality, say that the system receives a set of training samples, scores the samples according to a defined signal, selects a subset based on that score, updates one or more model parameters using the subset, and triggers a later training stage based on a measured condition.

That structure creates something much closer to a claimable method.

The reason this matters is simple. Patent claims need action. They need a method that unfolds. The clearer the flow, the easier it becomes to protect.
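The five steps above can be walked through on a toy problem. This Python sketch assumes a one-parameter model y = w * x and uses each sample's squared error as the scoring signal. It trains on the two highest-loss samples and starts the next stage once mean loss on all samples drops below a threshold. Every number here is an arbitrary illustration.

```python
def per_sample_loss(w: float, x: float, y: float) -> float:
    return (w * x - y) ** 2


def training_round(w, samples, k=2, lr=0.05, stage_threshold=0.1):
    """One round of the five-step flow on a toy model y = w * x.

    Returns (new_w, start_next_stage).
    """
    # 1. Receive a set of training samples (pairs of x, y).
    # 2. Score each sample with a defined signal: its current squared error.
    scored = [(per_sample_loss(w, x, y), x, y) for x, y in samples]
    # 3. Select the subset with the highest scores.
    subset = sorted(scored, reverse=True)[:k]
    # 4. Update the parameter using only the subset (one gradient step).
    grad = sum(2 * (w * x - y) * x for _, x, y in subset) / len(subset)
    w -= lr * grad
    # 5. Trigger a later stage when a measured condition holds: low mean loss.
    mean_loss = sum(per_sample_loss(w, x, y) for x, y in samples) / len(samples)
    return w, mean_loss < stage_threshold


data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]  # toy data from y = 3x
w = 0.0
for round_number in range(1, 51):
    w, start_next_stage = training_round(w, data)
    if start_next_stage:
        print(f"next stage triggered after round {round_number}, w = {w:.3f}")
        break
```

With this data the error shrinks by a constant factor each round, so the stage trigger fires after only a few rounds.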

Tie Each Step to a Real Function

Every step in a claim should earn its place. If a step does not help define the invention, narrow the boundary, or support the technical result, it may not belong in the main claim.

This is where many businesses can improve their patent strategy. They often include steps because the code contains them, not because the invention needs them.

The better habit is to ask what role each step plays. Does it define the smart data selection logic? Does it control when retraining happens? Does it limit updates to a subset of parameters?

Does it use feedback in a special way? If the step helps explain why the method works, it is doing real claim work.

Claim the Logic That Makes the Workflow Different

The strongest part of a model training patent is often not the existence of a training stage. It is the decision logic inside that stage.

That logic may decide which data enters training, when the objective changes, how the update path is selected, or how the system reacts to drift or feedback. This is where many businesses have their real edge.

Decision Rules Often Matter More Than Model Names

Founders sometimes focus too much on whether the workflow uses a transformer, diffusion model, graph model, or some other category. That may matter in some cases, but it is often not the heart of the invention.

The stronger patent story often lies in the rule that controls how training is carried out.

A claim built around a smart rule can be more durable than a claim tied to one model type. That is valuable for businesses because AI tools move fast. A workflow that depends on a specific model name may age quickly.

A workflow that depends on a useful training rule can remain relevant even as the underlying models change.

Capture Conditional Behavior

Many training workflows become valuable because they are not fixed. They adapt. They shift behavior when certain conditions appear.

They may switch from one data pool to another, retrain only when drift crosses a threshold, or change the weighting of examples when user corrections reach a certain pattern. This conditional behavior is often highly claimable.

Businesses should pay close attention to these triggers. A conditional rule is often where the technical intelligence of the workflow lives.

It also helps distinguish your process from a generic training method. That difference can be critical when shaping strong claims.
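Here is one hedged example of a drift trigger in Python. It computes the population stability index (PSI), a common drift measure, between a reference distribution and current data, both given as per-bin proportions. It retrains only when PSI exceeds a threshold. The 0.2 threshold and the example bins are assumptions; teams choose their own drift metric and cutoff.

```python
import math


def population_stability_index(reference, current, eps=1e-6):
    """PSI between two binned distributions given as per-bin proportions."""
    if len(reference) != len(current):
        raise ValueError("reference and current must use the same bins")
    psi = 0.0
    for ref_p, cur_p in zip(reference, current):
        ref_p, cur_p = max(ref_p, eps), max(cur_p, eps)  # guard against log(0)
        psi += (cur_p - ref_p) * math.log(cur_p / ref_p)
    return psi


def should_retrain(reference, current, threshold=0.2):
    """Retrain only when measured drift crosses the (assumed) threshold."""
    return population_stability_index(reference, current) > threshold


reference = [0.25, 0.25, 0.25, 0.25]
slight_shift = [0.24, 0.26, 0.25, 0.25]
large_shift = [0.10, 0.20, 0.30, 0.40]
print(should_retrain(reference, slight_shift))  # False: PSI is about 0.0008
print(should_retrain(reference, large_shift))   # True: PSI is about 0.23
```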

Protect the Business Benefit Without Writing a Business Claim

Every business wants patent claims that protect what really drives value. But claims cannot simply state the business result. They must protect the method that produces that result.

This means your claim should not say that the workflow improves efficiency or reduces cost in vague terms. Instead, it should recite the steps that make that improvement happen.

Link the Technical Steps to Commercial Impact

This is one of the most strategic parts of patent drafting. The business should know exactly which technical method drives the market advantage.

Maybe the workflow lowers retraining cost enough to serve mid-market customers. Maybe it improves adaptation speed enough to win enterprise accounts with changing data. Maybe it reduces bad outputs in a regulated setting.

Once that link is clear, the patent team can focus on the technical cause rather than the business slogan. That makes the claim stronger and more useful. It also helps leadership decide which inventions deserve filing priority.

Think About Copy Risk

A strong claim is not only about what your team built. It is also about what a competitor would try to copy once your product starts working in the market.

The most valuable claim often covers the training move that others would need if they wanted similar results under similar constraints.

This is why businesses should ask a practical question during claim development: if another company wanted to catch up fast, what exact part of our workflow would they be tempted to imitate?

The answer often points to the method that deserves the broadest and most careful claim coverage.

Use Layers of Claims, Not One Shot

No single claim should try to carry the whole protection strategy alone. Strong patent drafting usually uses layers. The first claim covers the core method.

The next claims add narrower versions, useful conditions, preferred signals, alternate data sources, fallback paths, or specific parameter control methods. This layered structure gives the patent more resilience.

The Main Claim Should Cover the Heart of the Workflow

The broadest claim should focus on the part of the workflow that makes the invention what it is. It should not try to describe every preferred version. Its job is to protect the center.

That center may be a sequence of data scoring, subset selection, conditional retraining, and selective parameter update. Or it may be some other chain. The point is that the main claim should guard the real concept.

This helps businesses because it creates room around the product, not just around one release version. It gives a better chance of covering future versions that still use the same core logic.

Supporting Claims Should Add Strategic Depth

Supporting claims are where you can add detail without shrinking the main boundary too early.

These claims may cover the use of confidence scores, the use of user feedback as a training signal, the freezing of selected layers, the use of synthetic samples in a defined ratio, or the triggering of retraining after a drift score crosses a threshold.

For businesses, this is extremely useful. It creates multiple points of protection. Even if one claim faces pressure later, others may still hold real value. It also helps capture the full richness of your workflow without overloading the main claim.

Avoid Claims That Read Like Research Papers

One of the biggest traps in AI patent work is writing claims that sound like academic writing. Research writing often focuses on novelty in a broad sense. Patent claims need a more exact boundary.

They should not read like theory. They should read like a method that a system performs.

Do Not Hide the Invention Behind Abstract Language

Phrases like adaptive optimization framework, dynamic intelligence layer, or context-aware learning engine may sound polished, but they often do more harm than good.

They can make the claim vague and hard to defend. Businesses should resist this style, especially when the real invention is much more concrete.

A better approach is plain language tied to real system actions. That does not mean the claim becomes casual. It means it becomes precise. Precision is what gives a patent weight.

Be Careful With Results-Only Language

Another common problem is claiming only the result. Saying that the system trains a model to improve output quality or reduce error does not say enough about how it gets there.

Strong claims need method steps that produce the result. The result can support the story, but it should not replace the structure.

This matters because competitors often reach similar goals through different means. If your claim only names the goal, it may be easy to design around, or it may draw a challenge that it claims an abstract result rather than a concrete technical method. The stronger move is to claim the path.

Make Room for Variations From Day One

A business should never assume the first version of its workflow is the only version that will matter. AI systems evolve quickly. New training data may arrive.

New customer types may push different needs. Better infrastructure may open new implementation paths. Good patent strategy plans for that from the start.

Write for the Family of Methods, Not Just One Build

When shaping claims, think about the family of methods that share the same core idea. If your current workflow scores samples using one metric, ask whether other scoring methods could still express the same invention.

If your current system triggers retraining based on one threshold, ask whether other trigger types would still fit the same concept.

This mindset matters for businesses because it helps the patent age well. You are not just protecting today’s workflow. You are protecting the broader method your company is likely to keep using as it improves the product.
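The family-of-methods idea can also be shown in code: keep the selection step fixed and pass the scoring signal in as a parameter. In this Python sketch, entropy and margin are two standard uncertainty measures used as interchangeable examples. The function names and the sample predictions are hypothetical.

```python
import math
from typing import Callable, Sequence

Probs = Sequence[float]  # predicted class probabilities for one sample


def entropy_score(p: Probs) -> float:
    """Higher when the prediction is spread across classes."""
    return -sum(q * math.log(q) for q in p if q > 0)


def margin_score(p: Probs) -> float:
    """Higher when the top two classes are close (assumes at least two classes)."""
    top, second = sorted(p, reverse=True)[:2]
    return 1.0 - (top - second)


def select_uncertain(preds: dict[str, Probs], score_fn: Callable[[Probs], float], k: int) -> list[str]:
    """Same selection step for any uncertainty signal: only score_fn changes."""
    return sorted(preds, key=lambda sid: score_fn(preds[sid]), reverse=True)[:k]


preds = {
    "s1": [0.98, 0.01, 0.01],
    "s2": [0.50, 0.45, 0.05],
    "s3": [0.40, 0.30, 0.30],
}
print(select_uncertain(preds, entropy_score, k=1))
print(select_uncertain(preds, margin_score, k=1))
```

The two signals pick different samples here: entropy favors `s3` and margin favors `s2`. Yet the structure of the method, score and then select, is unchanged. That shared structure is what a claim written for the family would aim to cover.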

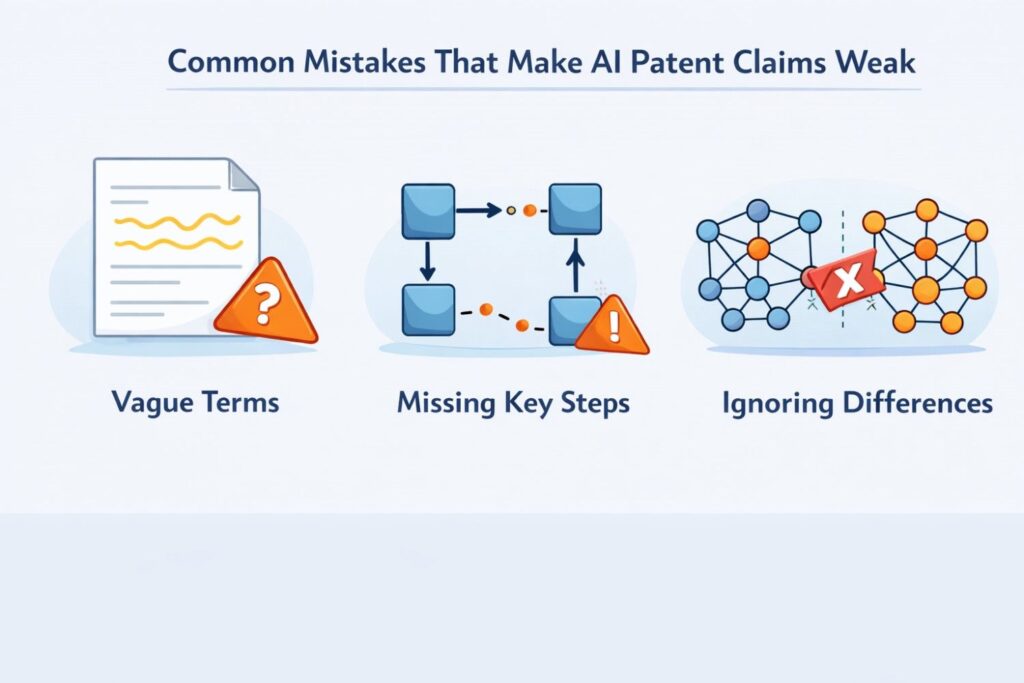

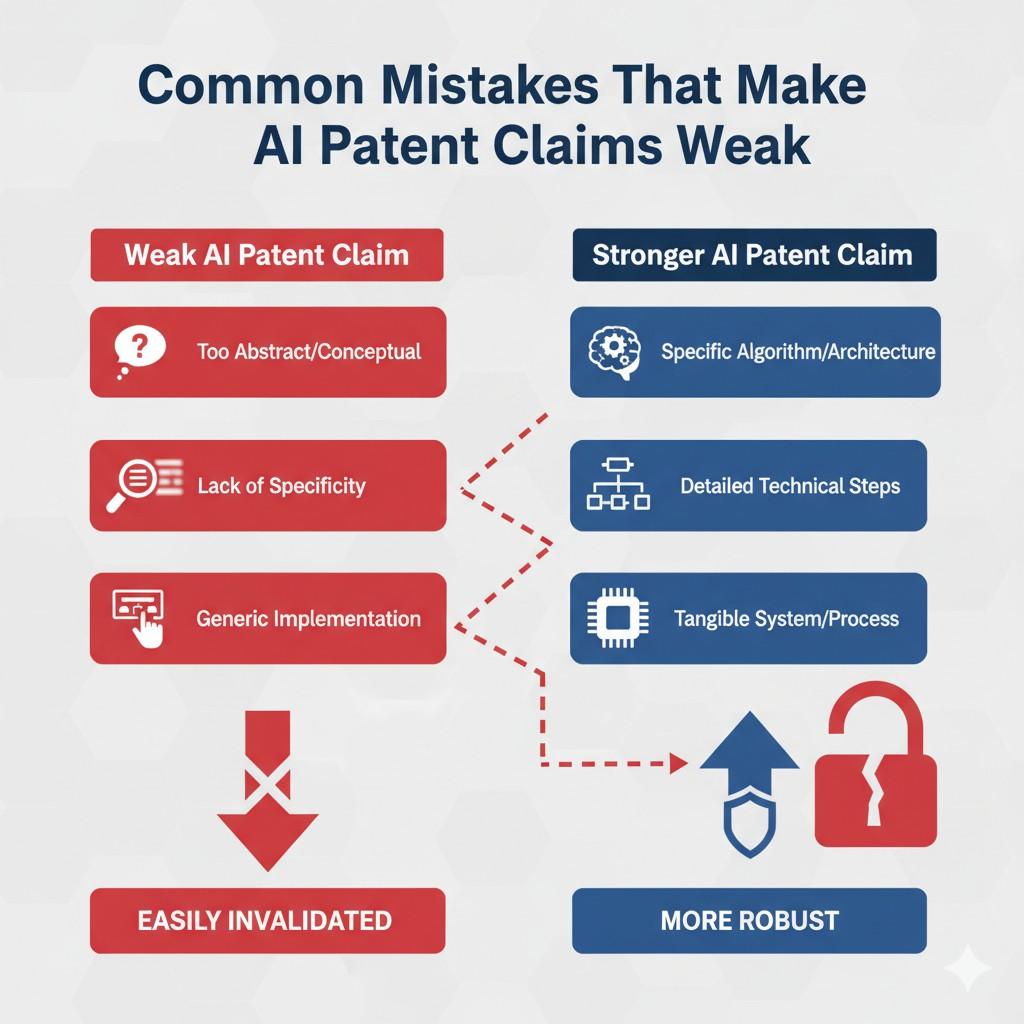

Common Mistakes That Make AI Patent Claims Weak

Strong AI patent claims do not fail only because the invention is weak. Many fail because the invention is described the wrong way. That is a big difference.

A company may build something smart, useful, and hard to copy, yet still end up with claims that do not hold much value.

This usually happens when the filing process focuses too much on surface language and not enough on the real method. For businesses, this is not a small drafting issue.

It is a protection issue. Weak claims can create a false sense of safety while leaving the core advantage exposed.

Writing the Claim Around the Buzzword Instead of the Method

One of the most common problems is that teams describe the invention using popular AI terms instead of real process steps. This happens all the time because AI companies live in a world full of fast-moving language.

Teams talk about fine-tuning, multi-modal learning, agents, adaptive systems, reinforcement loops, and self-improving models. Those labels may sound modern, but they do not protect much on their own.

Why Trendy Terms Do Not Create Strong Coverage

A buzzword is not a boundary. It does not explain what the system actually does. If a claim says the system uses machine learning to improve performance, that says almost nothing about the training method.

A competitor can read that and still have no clear limit on what is covered. In many cases, broad buzzword-heavy language makes the claim easier to challenge because it lacks concrete structure.

What Businesses Should Do Instead

A business should force itself to go one level deeper than the label. Instead of saying the invention uses adaptive training, describe what adapts, when it adapts, what signal causes the change, and what training action follows.

That shift turns marketing language into claimable method language. It also helps the company protect the real edge rather than the phrase attached to it.

Claiming the Goal Instead of the Path

Another major mistake is claiming the outcome while skipping the method that leads there. Many AI teams naturally speak in results because results are what matter in product reviews, investor updates, and customer calls.

They say the model becomes more accurate, safer, faster, or more efficient. Those outcomes matter, but they are not enough to support a strong patent claim.

Results Alone Leave the Door Open

If the claim focuses only on what the system achieves, it often leaves too much room for others to reach a similar result through a different route.

That weakens the claim because the real protection should cover the process that creates the advantage. A patent must capture the steps that make the result happen, not just the result itself.

The Better Framing for Founders and Teams

A useful business habit is to ask one simple question every time a team member describes a result. Ask how. Ask what exact training action caused the improvement.

Ask what changed in the data flow, update rule, trigger logic, feedback use, or parameter control. The answer to that question is often where the strong claim begins.

Describing the Workflow Too Broadly

Broad claims can sound powerful, but broad and vague are not the same thing.

Many AI patent claims become weak because they are drafted at such a high level that they no longer describe a real technical method. This often happens when teams try to cover too much territory in one shot.

High-Level Language Can Hide the Real Invention

A company may say that it trains a model using selected data and feedback to improve predictions.

That sounds broad, but it does not say enough.

It does not explain how the data is selected, what kind of feedback is used, how the feedback changes the training flow, or why this process differs from ordinary practice. Without that shape, the claim can lose force.

Why Business Value Suffers From Vague Drafting

Weakly defined claims do not just create legal risk. They also reduce business leverage. A weak claim is harder to enforce, harder to explain in diligence, and easier for competitors to move around.

The company may think it owns a broad area when in truth it owns very little. That mismatch can hurt fundraising, partnerships, and long-term defense.

Describing the Workflow Too Narrowly

The opposite mistake is also common. Some AI claims are drafted so tightly around one exact implementation that they become easy to avoid.

This happens when the drafting process copies too much directly from the current code or experiment setup without stepping back to identify the broader method.

Narrow Claims Break When the Product Evolves

AI systems change fast. Teams swap models, adjust thresholds, change data sources, add feedback loops, and revise update logic as the product matures.

If a claim is locked to one exact loss function, one exact architecture, one exact data format, or one exact training stack, its value can shrink quickly. It may cover only a short-lived version of the product.

How Businesses Can Avoid This Trap

A company should separate the core method from the current implementation details. The filing can still describe the exact build, but it should also explain the wider family of methods that express the same inventive idea.

This gives the patent room to stay relevant as the product grows. For businesses, that flexibility matters because the patent should support the road ahead, not just the codebase of one quarter.

Treating the Model as the Whole Invention

Many teams assume the value sits mainly in the model itself. That belief can make patent claims weak because it pulls attention away from the training process, control logic, and system behavior that often matter more.

In a lot of real-world AI products, the model is only one layer of the advantage.

The Hidden Value Often Sits Outside the Model

A business may use a widely known model family, yet still outperform competitors because of better sample selection, better retraining triggers, better feedback loops, better safety shaping, or better domain adaptation.

If the claim focuses only on the model and ignores those training methods, it may miss the strongest protectable value in the system.

Strategic IP Requires a Wider Lens

Businesses should look at the full training and update pipeline, not just the final model artifact.

That means asking what happens before a weight update, what governs the update, what happens after the update, and how the system decides what to do next.

This wider view often reveals the real invention more clearly than the model description alone.

Forgetting to Capture the Reason the New Method Was Needed

A strong patent story often becomes clearer when it starts with the technical problem that forced the team to change course.

One reason claims become weak is that the filing jumps straight into the method without explaining what the old approach could not do. That missing context can make the inventive step look smaller than it really is.

The Problem Gives Meaning to the Method

If the team faced unstable training, noisy labels, high compute cost, weak adaptation, customer drift, or delayed feedback, that problem helps explain why the new method matters.

It also helps show that the method was not obvious. Without that framing, the claim may read like just another training variation rather than a targeted solution to a real technical obstacle.

Businesses Should Preserve This Context Early

The best time to capture this is while the memory is fresh. Once the product moves ahead, teams often remember the final workflow but forget the failed first path and the turning point that made the new method necessary.

That lost detail can weaken the filing. Businesses should record the before-and-after story while the engineers can still explain it clearly.

Using Research Language Instead of Product Reality

AI companies often borrow writing habits from research papers. That is understandable, but it can create weak patent claims.

Research writing is often built to explain novelty, compare benchmarks, and discuss theory. Patent claims need something different. They need a practical method boundary.

Academic Framing Can Blur Commercially Useful Protection

A research-style description may focus on experimental setup, benchmark performance, or conceptual novelty without making clear the method the business actually relies on.

That can leave out the exact training controls that matter most in a real product setting. A company may then end up protecting the least useful part of the invention and overlooking the part that drives real commercial value.

Product-Driven Claiming Is More Useful for Business

A better approach is to ask what part of the method matters in real deployment. What part lowers cost? What part keeps quality stable? What part makes customer adaptation possible?

What part reduces failure in a live environment? Those practical details often lead to stronger claims because they reflect the system as it actually creates value.

Failing to Claim Conditional Logic

One of the richest parts of many AI systems is the logic that decides when training behavior changes.

Weak claims often ignore that logic. They describe a static training process even when the real value comes from switching rules, thresholds, triggers, and branching behavior.

Static Language Misses Dynamic Intelligence

A system may retrain only when drift passes a threshold. It may reweight examples only when certain failures cluster. It may freeze some parameters while updating others under a defined condition.

If the claim ignores this conditional logic, it may miss the part of the workflow that truly sets the invention apart.

Why Conditional Rules Matter for Strategy

For businesses, these rules are often highly defensible because they sit deep inside the product and connect directly to performance, cost, or reliability. They can also be hard for competitors to guess from the outside.

That makes them valuable patent material. A claim that omits them may leave a major part of the moat unprotected.

Wrapping It Up

Claiming model training methods in patents is not about dressing up your workflow with fancy language. It is about seeing clearly what your team actually built, what changed when the normal path failed, and what part of that training process gives your business a real edge.