AI can write fast. That is easy.

The hard part is getting it to say something real.

That is where many founders, engineers, and startup teams get stuck. They use AI to draft an invention description, a patent summary, or a technical write-up, and the result sounds polished. It reads smoothly. It looks complete. But when you slow down and really read it, something is missing.

The description says what the system does, but not how. It talks about benefits, but not mechanism. It uses smart words, but it does not carry the weight of real technical detail.

That gap matters a lot.

If you want strong patent protection, stronger technical writing, and better documents for review, filing, and strategy, you need to know how to take AI-written text and make it technically real.

That is what this article is about.

And if you want a faster way to do that with software built for startups and real attorney oversight, PowerPatent can help you turn rough technical work into stronger patent applications without the usual delays and confusion. You can see how it works here: https://powerpatent.com/how-it-works

AI gives you a shell, not always the substance

Let us start with the truth.

AI is very good at language.

It is not automatically good at your invention.

That single point explains most of the frustration people feel when they use AI for patent or technical drafting.

The model can create neat paragraphs. It can mirror the tone of a formal document. It can produce clear sentences and organized sections. But unless it is guided well, and unless it is fed the right source detail, it often creates a shell.

The shell looks right.

It may say things like the system receives data, processes the data, generates an output, and improves efficiency or accuracy. It may mention modules, engines, workflows, or models. It may sound advanced.

But if you ask simple follow-up questions, the weakness shows up fast.

What data exactly?

What processing step?

What decision rule?

What order?

What signal triggers the next action?

What is optional?

What is required?

What happens if the input is missing?

What changes when the confidence is low?

What part is new?

That is where shallow AI writing starts to break.

So the first mindset shift is this: AI-written descriptions are often a starting point, not a final asset.

They can save time. They can help structure thinking. They can help turn notes into sentences. But if you care about protecting an invention, explaining a technical system, or building real support for patent drafting, you need to go beyond the shell.

The real problem is not bad writing. It is missing mechanism

A lot of people think the issue is style.

They see an AI-written description and say it feels generic, robotic, vague, or too polished. Those things may be true, but they are not the core problem.

The core problem is usually missing mechanism.

Technical detail lives in mechanism.

Mechanism is the actual inner logic of what happens.

It is the path from input to output.

It is the set of steps, structures, relationships, signals, decisions, and conditions that make the invention work.

If the mechanism is thin, the description is thin, even if the writing is smooth.

This is why some AI-generated text can seem strong at first glance and still be weak where it counts. It talks around the invention instead of through it.

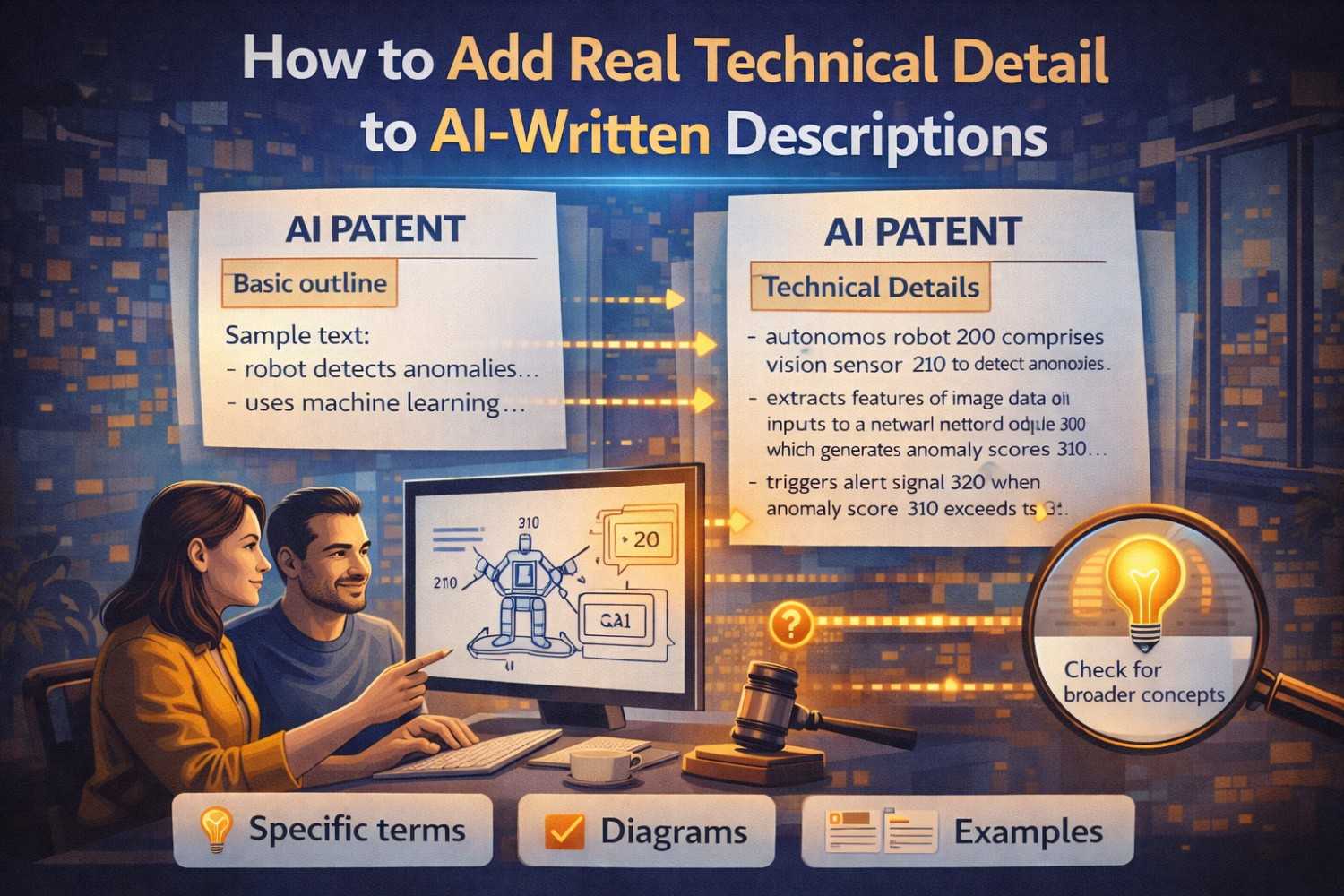

For example, an AI-written line may say that a system “uses machine learning to optimize routing decisions in real time.”

That sounds fine until you ask what the system actually does.

Does it receive live traffic data, historical route data, vehicle capacity, and customer time windows?

Does it score route options using a weighted model?

Does it reject options that violate policy rules before ranking the rest?

Does it re-run the selection when threshold events occur?

Does it compare live performance against expected delivery windows before changing a route?

Now we are getting somewhere.

That difference is the difference between broad language and real technical detail.

Real technical detail is what makes a description useful later

This matters for more than writing quality.

A description with real technical detail is more useful across the whole business.

It helps patent counsel understand what was actually invented.

It helps founders explain what is defensible.

It helps engineers review whether the write-up matches reality.

It helps future drafts get built faster.

It helps investors and acquirers see that the company’s IP is tied to actual technical work, not surface claims.

And most of all, it helps support patent filings that need more than high-level outcomes.

This is one reason so many startup teams struggle with old-school patent processes. The first draft may come back formal and long, but still not reflect the technical core well enough.

PowerPatent was built to fix that gap by helping startups move from raw technical work to stronger patent-ready descriptions with real attorney review layered on top. If that sounds useful, start here: https://powerpatent.com/how-it-works

Why AI-written descriptions drift toward generic language

To fix the problem, you need to understand why it happens.

AI tends to drift toward generic language for a simple reason. Generic language is the safest guess when specific detail is missing.

If you give AI only a short invention summary, it has to fill the gaps somehow. It cannot invent reliable technical truth on its own. So it produces broad patterns that sound plausible across many inventions.

That is why you see the same kinds of phrases over and over.

The system receives input.

The system analyzes data.

The system generates an output.

The model improves performance.

The process increases efficiency.

The platform adapts dynamically.

None of those lines is wrong in itself. They are just not enough on their own.

The same thing happens when the prompt is too broad. If you ask AI to “write a patent description for my AI platform,” the output will often reflect whatever common structure fits many AI platforms.

That is not because AI is broken. It is because the prompt did not force the detail that makes your invention yours.

So if you want real technical detail, you need to create the conditions for it.

That means better source material, better questions, better prompting, better review, and better editing.

The first step is to stop asking AI to impress you

This sounds small, but it matters.

Many people judge AI output by whether it sounds smart.

That is a weak test.

You should judge it by whether it teaches a technical reader something real.

A description is not strong because it sounds formal.

It is strong because it communicates technical truth clearly enough that someone can understand the system, the process, the structure, and the inventive point.

So when you read an AI-written paragraph, do not ask, “Does this sound professional?”

Ask, “What did I actually learn from this?”

Could you draw the system from the paragraph?

Could you map the steps?

Could you tell what inputs matter?

Could you explain the difference between this invention and the old approach?

Could you spot what is optional and what is core?

If the answer is no, the paragraph needs more work.

This one habit can change how your whole team uses AI for drafting.

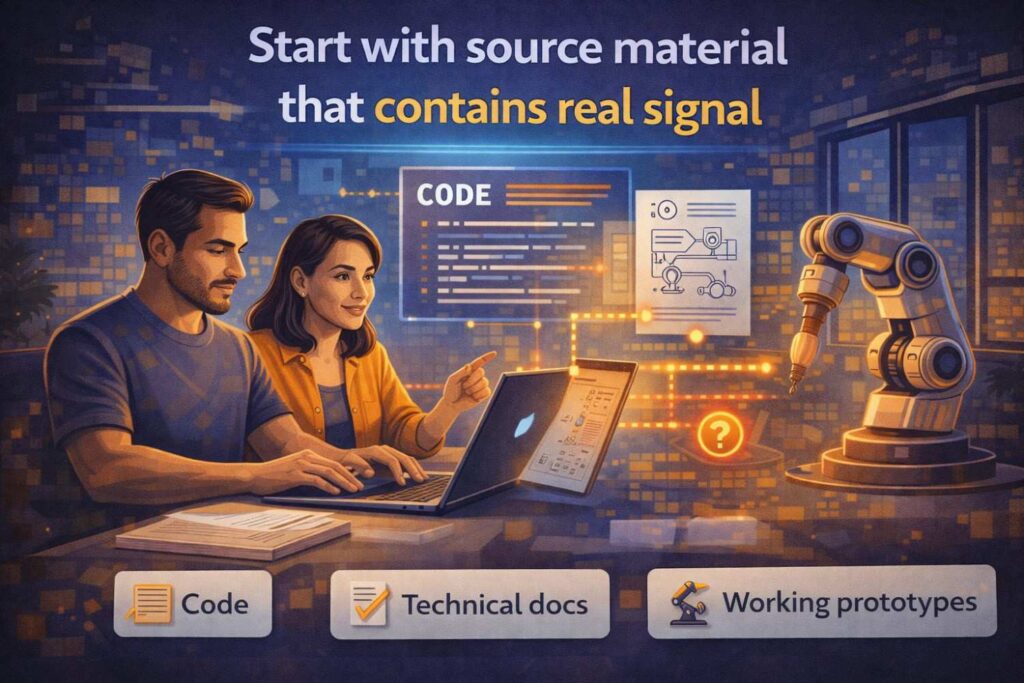

Start with source material that contains real signal

If the input is thin, the output will often be thin.

This is one of the most important truths in AI-assisted writing.

To add real technical detail, you need raw material that actually contains that detail.

That can come from design docs, code comments, architecture notes, system diagrams, sprint planning docs, engineering chats, whiteboard photos, inventor interviews, internal demos, and test notes.

The source does not have to be perfect. It just needs to contain the truth of how the invention works.

A lot of founders make the mistake of giving AI only the invention’s headline.

They provide a title and a short summary. Then they hope the model will expand it into something rich.

That almost always leads to soft detail.

The richer approach is to feed the model the facts that make the system real.

What are the components?

What is the order of operations?

What inputs come in?

What logic is applied?

What decisions are made?

What outputs are produced?

What thresholds matter?

What conditions change the flow?

What alternate paths exist?

What parts can vary?

What failure states are handled?

That is the kind of source material AI needs if you want better technical writing.

Pull detail out of inventors before you polish the prose

This is where many teams work in the wrong order.

They start polishing language before they have extracted the full invention.

That is backward.

You need to pull out the technical detail first. Then you shape it into clear prose.

If you skip that first part, you end up polishing a weak foundation.

The best way to surface real detail is often through focused inventor interviews.

Ask the inventors to explain the system like they are walking a new engineer through it.

Ask what changed in the system because of the invention.

Ask what the old method did instead.

Ask what exact problem kept breaking before this solution was built.

Ask what must happen first.

Ask what happens next.

Ask which decision point matters most.

Ask what a competitor would copy first.

Ask what part looks simple from the outside but was hard to get right.

Ask where the system behaves differently when conditions are uncertain or messy.

These questions pull out the kind of detail that AI cannot invent on its own.

Once you have those answers, AI becomes much more useful. Now it is working with substance.

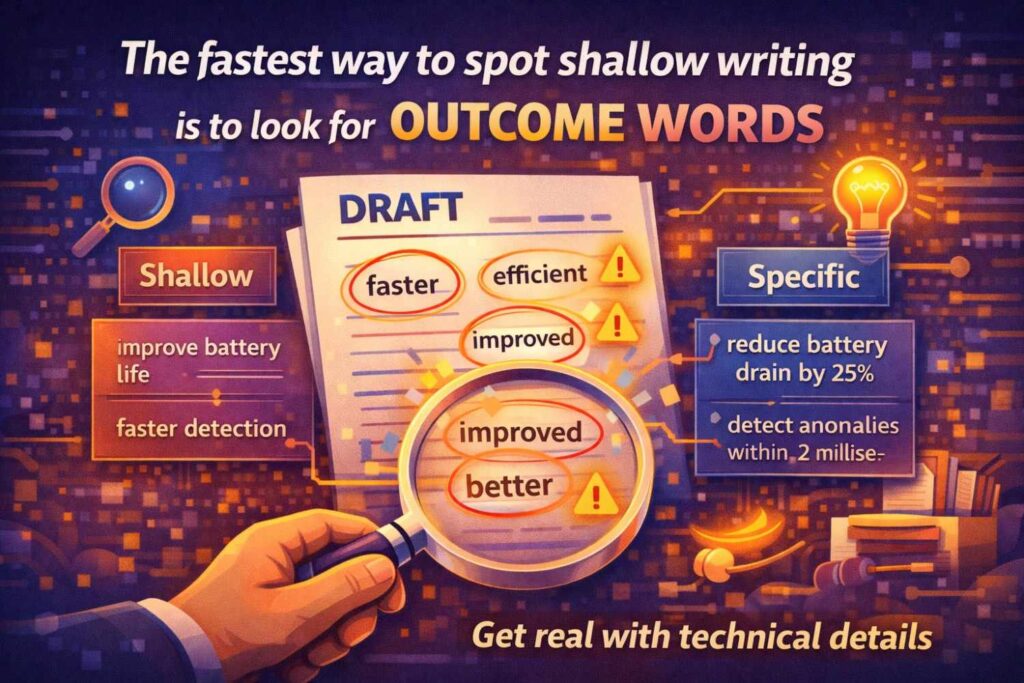

The fastest way to spot shallow writing is to look for outcome words

Outcome words are words that describe the result without explaining the method.

Words like improves, optimizes, adapts, enhances, manages, selects, recommends, determines, and processes often work this way.

Again, these words are not the enemy. They just need support.

If a paragraph leans heavily on outcome words, stop and ask what is happening underneath them.

If the text says the system “determines a recommended action,” ask how.

Does it compute a score?

Does it compare signals?

Does it rank outputs?

Does it apply rules and thresholds?

Does it use a decision tree or another model?

Does it pull data from a memory store first?

Does it update based on live feedback?

That follow-up is where technical detail begins.

A very useful editing move is to underline every outcome word in an AI-written draft and then force the paragraph to explain what sits beneath each one.

You will often find that one vague sentence becomes three much stronger ones once the mechanism is added.
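The underlining pass can be partly automated. The sketch below is a rough heuristic, not a linguistic tool: it splits a draft into sentences and flags any word that begins with one of the outcome-verb stems listed above. The stem list and the function name `flag_outcome_words` are illustrative choices, and prefix matching will also catch nouns such as "processor", so treat each hit as a prompt for review rather than a verdict.

```python
import re

# Stems of the outcome words named in this section (illustrative, extend as needed).
OUTCOME_STEMS = (
    "improv", "optimiz", "adapt", "enhanc", "manag",
    "select", "recommend", "determin", "process",
)

# Match any word that starts with one of the stems, ignoring case.
_PATTERN = re.compile(r"\b(?:" + "|".join(OUTCOME_STEMS) + r")\w*", re.IGNORECASE)


def flag_outcome_words(draft: str) -> list[tuple[int, str, str]]:
    """Return (sentence_number, matched_word, sentence) for every flagged word."""
    # Naive sentence split: break after ., !, or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", draft) if s.strip()]
    hits = []
    for number, sentence in enumerate(sentences, start=1):
        for match in _PATTERN.finditer(sentence):
            hits.append((number, match.group(0), sentence))
    return hits


if __name__ == "__main__":
    sample = "The system determines a recommended action. It stores the result."
    for number, word, sentence in flag_outcome_words(sample):
        print(f"sentence {number}: '{word}' needs mechanism -> {sentence}")
```

Each flagged sentence is a candidate for the "ask how" follow-up described above.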

One simple test: can you draw it?

Here is one of the best ways to judge whether an AI-written description has real technical detail.

Try to draw it.

If you cannot sketch the system, flow, or method from the text, the writing is probably still too high level.

A good technical description usually gives enough signal that a reader could draw the main architecture or process steps with reasonable confidence.

They may not get every minor implementation choice, but they should be able to identify the main parts and how they relate.

For a system, that may mean they can draw the components and the data flow between them.

For a method, that may mean they can draw the sequence of steps and the key decision points.

For a device, that may mean they can draw the main physical elements and their relationships.

If the text does not let you do that, it likely needs more structure and more specificity.

This is one of the reasons figures and written descriptions work so well together in patent drafting. Each helps expose what the other is missing.

AI descriptions often miss the middle

This is a subtle but common problem.

AI tends to describe the start and the finish, but not the middle.

It will tell you the system receives input.

Then it tells you the system produces output.

But the middle, where the invention really lives, gets compressed into one vague phrase.

That middle may include filtering, normalization, feature extraction, ranking, threshold checks, policy application, comparison against stored data, model inference, validation, conflict resolution, or fallback handling.

That middle is where the value often sits.

This is why one of the best ways to improve AI-written descriptions is to force expansion of the middle.

Ask, “What happens between receiving the data and generating the output?”

Ask, “What transformations occur before the decision?”

Ask, “What checks are applied before the recommendation is issued?”

Ask, “What conditions change the path?”

Once you expand the middle, the whole description gets stronger.

Describe the invention at more than one level

A strong technical description usually works at multiple levels at once.

It explains the invention broadly enough to show the general concept.

It also explains it specifically enough to show the mechanism.

If you only have the broad layer, the writing feels empty.

If you only have the narrow layer, the writing can get trapped in one implementation.

So when editing AI-written descriptions, aim for both.

At the higher level, explain what the system or method is doing in a general technical sense.

At the lower level, explain how one representative implementation works.

For example, the broad layer may explain that the invention dynamically allocates compute resources based on predicted task urgency and current system load.

The lower layer may explain that the system receives task metadata, assigns urgency values using a scoring model, filters available resources by compatibility constraints, ranks the remaining resources by projected completion time and cost, and assigns the task to the highest-ranked resource that meets a threshold policy.

Now the description has both scope and support.

That combination is much more useful than either layer alone.
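To show how much the lower layer pins down, the compute-allocation example can be written as a short sketch. Everything named here is an assumption made for illustration: the `Task` and `Resource` fields, the `urgency_value` formula, the choice to let urgency weight completion time, and the cost cap standing in for the threshold policy. It is one possible reading of the paragraph, not a definitive implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Task:
    name: str
    priority: int        # hypothetical metadata: 1 (low) to 5 (high)
    deadline_s: float    # hypothetical metadata: seconds until the task is due
    required_arch: str   # hypothetical compatibility constraint


@dataclass
class Resource:
    name: str
    arch: str
    projected_completion_s: float
    cost: float


def urgency_value(task: Task) -> float:
    """Stand-in scoring model: higher priority and a nearer deadline raise urgency."""
    return task.priority / max(task.deadline_s, 1.0)


def allocate(task: Task, resources: list[Resource], max_cost: float) -> Optional[Resource]:
    # Step 1: assign an urgency value from the task metadata.
    urgency = urgency_value(task)
    # Step 2: filter the available resources by the compatibility constraint.
    compatible = [r for r in resources if r.arch == task.required_arch]
    # Step 3: rank by projected completion time and cost (lower is better).
    # Assumption: urgency scales how heavily completion time counts.
    ranked = sorted(
        compatible,
        key=lambda r: urgency * r.projected_completion_s + r.cost,
    )
    # Step 4: assign to the highest-ranked resource that meets the threshold
    # policy, modeled here as a simple cost cap.
    for resource in ranked:
        if resource.cost <= max_cost:
            return resource
    return None
```

Writing the steps out this way tends to expose the questions the broad sentence hides, such as what happens when no resource satisfies the policy (here the function returns `None`).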

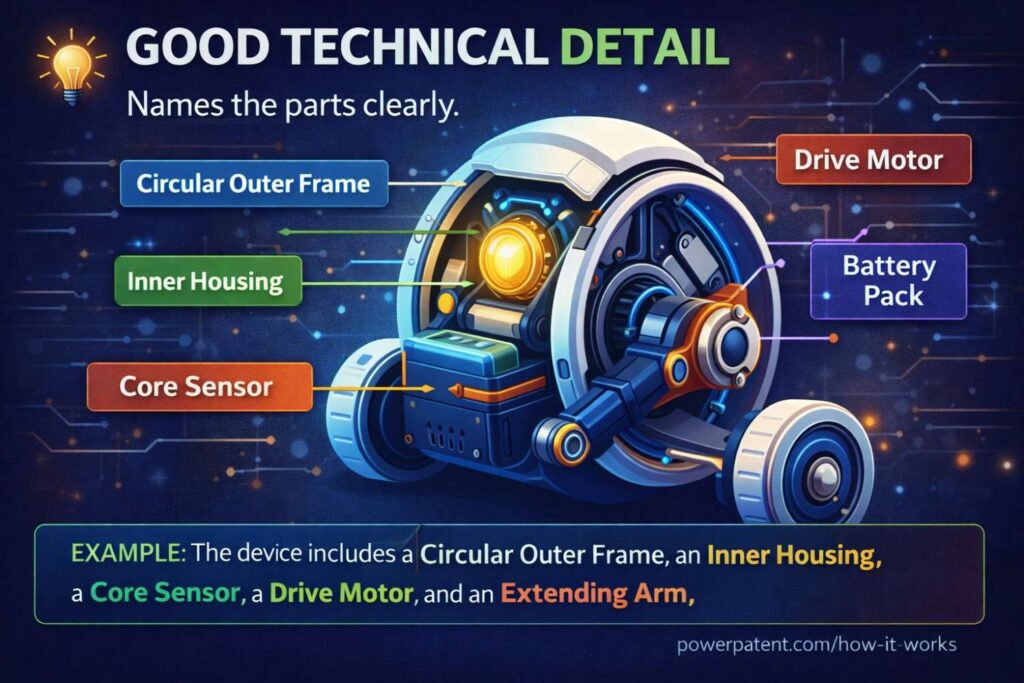

Good technical detail names the parts clearly

One reason AI-written descriptions feel slippery is that they often fail to name the important pieces in a stable way.

They refer to the platform, the engine, the module, the processor, the component, the logic, the layer, the model, and the service, and the same element may appear under a different label from one paragraph to the next.

This makes the description harder to follow.

Real technical detail often gets clearer when you choose names for the main elements and use them consistently.

That does not mean the names need to be fancy. In fact, simple names are often better.

Signal processor.

Routing module.

Confidence engine.

Validation service.

Policy layer.

Output generator.

Resource allocator.

Once you name the important parts, you can explain what each part does and how they interact.

This turns a floating paragraph into a system.

And systems are easier to understand, review, and protect.

Replace product language with system language

Founders often describe inventions through the lens of the product.

That is natural. It is how they talk every day.

But AI will often mirror that product language unless you push it toward system language.

Product language focuses on what the user sees and feels.

System language focuses on what the technology does under the hood.

For example, product language may say that the app helps users find the best supplier faster.

System language may say that the system receives supplier metadata, user preference data, and live availability signals, applies a weighted evaluation process to candidate suppliers, filters candidates based on policy constraints, and outputs a ranked set of supplier options.

The second version is much more useful for technical writing and patent drafting.

It does not throw away the business benefit. It just roots the explanation in mechanism.

This shift matters a lot if you want AI-written text to become technically meaningful.

Do not let “AI” become the detail

This is a huge issue in modern startup writing.

Teams say the invention uses AI, and they stop there.

That is not real detail.

“AI” is a category label. It is not the mechanism by itself.

If your description says the system uses AI to classify, predict, recommend, detect, optimize, generate, or adapt, you still need to explain what the larger pipeline is doing.

What inputs reach the model?

How are they prepared?

What happens before the model step?

What happens after it?

How is confidence handled?

How are outputs validated?

What triggers re-processing?

How does the result affect system behavior?

What fallback path exists if the model output is weak or uncertain?

A strong AI-related description does not hide behind the phrase “uses AI.” It explains where the model sits in the process and how it interacts with the rest of the system.

That is where the real technical story lives.
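The questions above can be made concrete with a small pipeline sketch that surrounds a model call with preparation, validation, and a fallback path. The function name, the peak normalization, the confidence threshold of 0.7, and the `"needs_review"` fallback label are all hypothetical choices made for illustration, and the `model` argument is any callable that returns a label and a confidence value.

```python
from typing import Callable, Sequence


def classify_with_fallback(
    raw: Sequence[float],
    model: Callable[[list[float]], tuple[str, float]],
    allowed_labels: set[str],
    min_confidence: float = 0.7,
    fallback_label: str = "needs_review",
) -> str:
    # Before the model step: handle missing input without calling the model.
    if not raw:
        return fallback_label
    # Preparation: scale inputs by the largest magnitude (1.0 if all are zero).
    peak = max(abs(v) for v in raw) or 1.0
    features = [v / peak for v in raw]
    # Model step: the model returns a label and a confidence value.
    label, confidence = model(features)
    # After the model step: validate the output and apply the fallback path
    # when the label is unexpected or the confidence is too low.
    if label not in allowed_labels or confidence < min_confidence:
        return fallback_label
    return label
```

In a description, each commented stage would typically become its own sentence or paragraph, so the reader can see that the model is one step in a larger flow.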

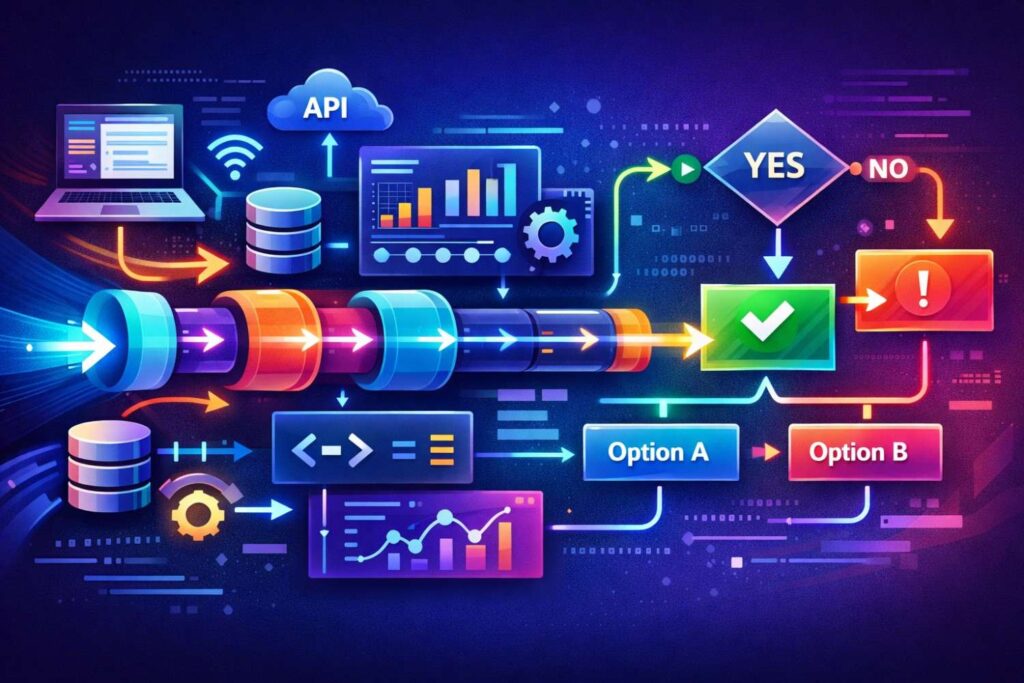

For software, talk through data flow and decision flow

Software inventions become clearer when described through flow.

Data flow shows what moves through the system.

Decision flow shows what logic changes the path.

AI-written descriptions often mention one or the other, but not both.

If you want stronger technical detail, build both into the draft.

What data enters first?

Where is it stored, filtered, transformed, or enriched?

What module receives it next?

What condition causes the system to branch?

What score or flag is generated?

What output is selected?

What event triggers another pass?

What happens if the result falls below a threshold?

This kind of writing helps transform a vague software paragraph into a useful technical description.

For hardware, structure and relationship matter

Hardware descriptions need a different kind of detail.

AI often writes hardware descriptions like a shopping list.

It names a sensor, a controller, a housing, a connector, and an actuator, but it does not explain their relationship, placement, signal flow, operating sequence, or physical effect.

That is why hardware descriptions need editing for structure and interaction.

How are the parts arranged?

What is connected to what?

What signal moves between them?

What movement or force results?

What timing matters?

What dimensions, materials, or placement choices affect operation?

What alternate physical configurations are possible?

The detail in hardware writing is not just about naming components. It is about explaining the way the structure behaves.

One of the best editing moves is to expand each verb into a step

AI often compresses a complex sequence into one verb.

It says the system filters, ranks, validates, scores, allocates, encrypts, synchronizes, compares, or identifies.

Each of those verbs may actually hide several smaller steps.

If you want stronger technical detail, unpack them.

For example, “ranks” may actually mean the system assigns a score to each candidate based on multiple weighted factors, removes candidates that fail a threshold rule, sorts the remaining candidates by composite score, and selects the highest-scoring candidate.

That is far better than the single verb alone.

A practical way to edit AI-written text is to take every major verb and ask, “What does that action consist of?”

Then turn the answer into one or more clear sentences.

This is one of the fastest ways to deepen a description without changing the invention itself.
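The expansion of "ranks" described above maps almost line for line onto code. The following is a minimal sketch under stated assumptions: candidates are plain dictionaries with an `id` and a `factors` mapping, the threshold rule is a minimum composite score, and the names `rank_candidates`, `weights`, and `min_score` are illustrative.

```python
def rank_candidates(candidates, weights, min_score):
    """Score, threshold, sort, and select, one step per sub-action of 'ranks'."""
    # Step 1: assign each candidate a composite score from weighted factors.
    scored = [
        (sum(weights[name] * c["factors"][name] for name in weights), c["id"])
        for c in candidates
    ]
    # Step 2: remove candidates that fail the threshold rule.
    kept = [(score, cid) for score, cid in scored if score >= min_score]
    # Step 3: sort the remaining candidates by composite score, highest first.
    kept.sort(key=lambda pair: pair[0], reverse=True)
    # Step 4: select the highest-scoring candidate, if any survived.
    selected = kept[0][1] if kept else None
    return selected, [cid for _, cid in kept]
```

A single verb in the draft has become four distinct steps, each of which can be described, varied, or claimed separately.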

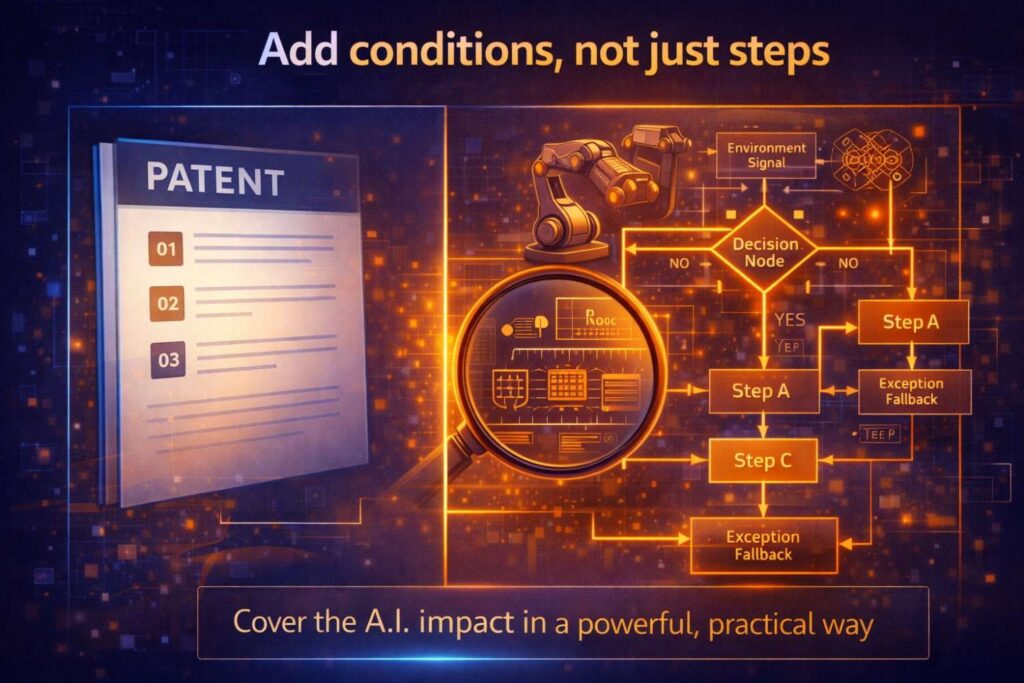

Add conditions, not just steps

A lot of AI-written descriptions improve once you add not just the main steps, but the conditions that shape those steps.

Conditions make the system feel real.

They answer questions like when a step occurs, why one path is chosen over another, and what changes the system’s behavior.

For example, instead of saying the system generates a recommendation and sends it to a user, explain under what condition the recommendation is sent.

Is it sent only when confidence exceeds a threshold?

Is it sent after a secondary check?

Is it delayed if conflict exists between two signals?

Is it routed to human review if uncertainty remains high?

These conditional elements often carry real inventive value. They also make the description more believable and more useful.
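As an illustration, the conditions listed above can be collected into a single decision function. This is a sketch under assumptions, not a prescribed design: the `Action` names, the 0.8 threshold, and the order in which the checks run are all hypothetical.

```python
from enum import Enum


class Action(Enum):
    SEND = "send"
    DELAY = "delay"
    HUMAN_REVIEW = "human_review"


def decide_dispatch(
    confidence: float,
    secondary_check_passed: bool,
    signals_conflict: bool,
    send_threshold: float = 0.8,
) -> Action:
    # Conflicting signals delay the recommendation regardless of confidence.
    if signals_conflict:
        return Action.DELAY
    # Send only when confidence meets the threshold and the secondary check passed.
    if confidence >= send_threshold and secondary_check_passed:
        return Action.SEND
    # Otherwise uncertainty remains, so route the recommendation to human review.
    return Action.HUMAN_REVIEW
```

Even a function this small forces a choice about which condition takes priority, and that ordering is exactly the kind of detail a generic draft leaves out.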

Add edge-case handling where it matters

Real systems do not only work in perfect conditions.

That is why technical detail often becomes stronger when you explain how the system behaves when something is missing, weak, delayed, conflicting, or uncertain.

AI-generated text often skips this because it aims for a clean narrative.

But edge cases are where many inventions show depth.

What happens if the user input is incomplete?

What happens if sensor data is noisy?

What happens if a network call fails?

What happens if the system has multiple equally ranked options?

What happens if the model confidence is below a target threshold?

What happens if stored state information is stale?

When these issues matter to the invention, include them.

You do not need to turn every draft into a fault manual. But strategic edge-case detail can make a description feel much more real and much more technically grounded.

Use examples to make abstract logic concrete

Sometimes AI-written descriptions are too abstract because they never touch a concrete example.

The fix is not to narrow the invention to one use case. The fix is to use a representative example that teaches the mechanism clearly.

For instance, instead of only saying that the system ranks options based on user-specific and environment-specific inputs, use a concrete example.

Explain that the system may receive a target delivery location, a current driver route, live traffic conditions, package priority data, and building access history, then generate a delivery risk score and adjust the stop sequence based on the score.

Now the abstract idea has weight.

Examples help the reader see the system in motion.

Just make sure the example is presented as an example, not the only form of the invention.

Tell the story of change

A lot of shallow descriptions fail because they do not explain what changed.

They describe the invention as though it simply exists.

But real technical detail often becomes clearer when you explain the before and after.

What did earlier systems do?

Where did they break?

What was changed in the new approach?

What new path, structure, or control logic was introduced?

What result follows from that change?

This is not just useful in the background section. It is useful throughout the description.

The invention becomes easier to understand when the reader can see the delta.

That is especially important in patent drafting, where the technical contribution needs to feel grounded in a real change to how something is done.

The best technical detail often comes from “why” questions

When editing AI-written text, do not only ask what happens.

Ask why it happens that way.

Why is the score calculated before the threshold check?

Why does the system use stored profile data at that stage?

Why does the controller adjust the output only after the second signal is received?

Why does the inference result go through validation before being applied?

Why is one path distributed and another centralized?

These why questions often reveal the design logic that makes the invention more than a sequence of steps.

That design logic is valuable. It can help distinguish your approach from a generic system description.

Look for hidden novelty in the connections, not just the parts

Many inventions are not new because they have brand-new pieces.

They are new because they combine known pieces in a new way.

AI-written descriptions often miss this because they focus too much on naming components and not enough on how those components interact.

If the inventive value lives in the connection between modules, the timing of data exchange, the use of one output as the trigger for another process, or the feedback path between components, the description must make that visible.

For example, the invention may not be a new sensor and not a new model, but rather the way the sensor output is preprocessed, filtered by context rules, scored by a model, and then fed into a real-time control path.

That connection is the invention.

So when you revise AI-written descriptions, ask not only what the parts are, but how the parts create something new together.

Add optional detail without turning it into a requirement

There is a balance to strike here.

You want more technical detail, but you do not want to trap the invention inside one narrow implementation unless that is truly the point.

That means some added detail should be framed as one embodiment, one example, one version, or one possible configuration.

This lets you enrich the description without shrinking the overall scope.

For instance, you can explain that in some implementations the score may be based on a weighted combination of latency, cost, confidence, and resource availability, while also making clear that other scoring approaches may be used.

That kind of detail helps support the invention while preserving flexibility.

This is one reason founder teams benefit from systems that mix AI speed with legal review. It is not enough to add more words. You want to add the right kind of detail in the right way. PowerPatent helps teams do exactly that with modern drafting support plus real attorney oversight. Learn more here: https://powerpatent.com/how-it-works

Use repetition carefully: repeat concepts, not empty phrasing

AI has a habit of repeating language.

That can make a draft feel padded without adding technical value.

But some repetition is helpful when it repeats the core technical concept from different angles.

For example, the same invention may be described once as a system, once as a method, once through a flow example, and once through an alternate embodiment.

That is useful repetition because it strengthens support and understanding.

What you want to avoid is repeated filler.

The system improves performance.

The system enhances efficiency.

The system optimizes operation.

The system increases reliability.

If these statements are not tied to mechanism, they do not add much.

When revising AI-written text, keep the repetition that deepens the concept and remove the repetition that only restates the benefit.

Force the draft to answer practical questions

This is one of the best editing techniques of all.

After reading a paragraph, ask practical questions a real engineer or patent reviewer would ask.

Where does this data come from?

Where is it stored?

What triggers this calculation?

What happens before this step?

What happens if the value is below threshold?

What are the main system components involved here?

Can this happen on one device or multiple devices?

What output is generated and who receives it?

What does the system do next?

If the paragraph cannot answer these questions, it is probably still too soft.

This method works because technical detail is practical by nature. It describes how something actually works in the real world.

Use AI to critique AI

One of the smartest moves you can make is to use AI not only to draft but to review the draft.

Once you have an AI-written description, ask the model to identify vague language, missing mechanism, unsupported generalizations, inconsistent terms, and places where more technical explanation is needed.

Ask it what a technically trained reader would still want to know.

Ask it what parts of the process are implied but not explained.

Ask it to compare the write-up against your source notes and identify what got lost.

This review stage can be incredibly useful.

It turns AI from a first-pass writer into a pressure-testing tool.

That is often where the most useful fixes come from.

Translate code into logic, not just labels

If the invention lives in software, one of the best ways to add real technical detail is to work backward from code or pseudocode.

Do not dump raw code into the description and call that technical detail. That is usually not the right move.

Instead, translate code into logic.

What variables are received?

What decision is made first?

What loop or iteration occurs?

What conditions branch the flow?

What data structure is queried?

What output is generated?

What update occurs afterward?

This process helps anchor the description in the real system while keeping it readable and flexible.

A lot of vague AI-written software descriptions become much stronger once they are tied back to actual implemented logic.
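To illustrate the translation step, the hypothetical function below pairs each line of code with the logic-level sentence it might become in a description. The function `refresh_profile`, its field names, and the one-hour staleness limit are invented for this example and do not come from any real system.

```python
def refresh_profile(profile: dict, events: list[dict], max_age_s: float = 3600) -> dict:
    # "The system receives a stored profile and a set of recent events."
    updated = dict(profile)
    # "For each event, the system compares the event age against a staleness limit."
    for event in events:
        # "Events older than the staleness limit are excluded from the update."
        if event["age_s"] > max_age_s:
            continue
        # "For each remaining event, the system identifies the event category
        #  and increases a per-category value by the event weight."
        category = event["category"]
        updated[category] = updated.get(category, 0) + event["weight"]
    # "The system outputs the updated profile without altering the stored copy."
    return updated
```

The quoted sentences, not the code, are what belong in the write-up. They keep the variables, the branch condition, the loop, and the update, while leaving room for other implementations.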

Technical detail often lives in sequence

Order matters more than many people think.

An AI-written description may mention the right steps but still feel weak because it does not show the sequence clearly.

Does the system score the candidates before applying policy rules, or after?

Does it normalize the sensor output before comparison, or after?

Does it update the stored profile before issuing the output, or only after feedback is received?

These sequencing details can matter a great deal.

Sometimes the inventive value is partly in the order.

So when you revise a description, look carefully at sequence. If sequence matters, make it clear.
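A short sketch shows why the order is worth stating. Both hypothetical functions below use the same two steps, a policy check and a top-`k` ranking, but apply them in opposite order, and they can return different results for the same input. The function names and the dictionary fields are assumptions made for illustration.

```python
def policy_then_rank(candidates, allowed, k):
    """Apply policy rules first, then keep the top k by score."""
    permitted = [c for c in candidates if allowed(c)]
    return sorted(permitted, key=lambda c: c["score"], reverse=True)[:k]


def rank_then_policy(candidates, allowed, k):
    """Keep the top k by score first, then apply policy rules."""
    top = sorted(candidates, key=lambda c: c["score"], reverse=True)[:k]
    return [c for c in top if allowed(c)]


if __name__ == "__main__":
    candidates = [
        {"id": "a", "score": 0.9, "approved": False},
        {"id": "b", "score": 0.8, "approved": True},
        {"id": "c", "score": 0.7, "approved": True},
    ]
    is_approved = lambda c: c["approved"]
    print([c["id"] for c in policy_then_rank(candidates, is_approved, 2)])
    print([c["id"] for c in rank_then_policy(candidates, is_approved, 2)])
```

With this input the first ordering yields two candidates and the second yields one, because the unapproved top scorer takes a slot before being removed. A description that only says the system "applies policy rules and ranks candidates" does not tell the reader which behavior is intended.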

Make tradeoffs visible when they matter

Good technical detail sometimes includes the reason one path is chosen over another.

Maybe the system uses local processing to reduce latency.

Maybe it delays action until a second verification signal arrives to reduce false positives.

Maybe it uses a lightweight model first and a deeper model only when uncertainty remains high.

Maybe it stores summary state locally but full history remotely.

These choices often reveal the engineering thought behind the invention.

That kind of detail makes the description feel much more real and much more tied to actual design work.

Strong descriptions often connect to system goals through mechanism

You do not need to remove benefits from the description. You just need to link them to mechanism.

For example, instead of saying the system improves accuracy, explain that the system compares a first output against a secondary validation stage before presenting a final result, thereby reducing low-confidence or conflicting outputs.

Instead of saying the invention reduces compute load, explain that the system filters candidate inputs using a lightweight scoring stage before applying a more resource-intensive model to a smaller subset.

Now the benefit is grounded in the process.

That is much stronger.
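The compute-load example can be sketched as a two-stage selection. This is a minimal illustration under assumptions: `cheap_score` and `expensive_score` are placeholder callables standing in for the lightweight scoring stage and the resource-intensive model, and `shortlist_size` is a hypothetical parameter.

```python
def two_stage_select(items, cheap_score, expensive_score, shortlist_size):
    """Shortlist with a cheap score, then run the expensive model on the shortlist only."""
    # Stage 1: the lightweight scoring stage ranks every candidate input.
    shortlist = sorted(items, key=cheap_score, reverse=True)[:shortlist_size]
    # Stage 2: the resource-intensive model runs only on the smaller subset.
    best_item, best_score = None, None
    for item in shortlist:
        score = expensive_score(item)
        if best_score is None or score > best_score:
            best_item, best_score = item, score
    return best_item
```

The benefit is now traceable to a mechanism: the expensive model is called at most `shortlist_size` times instead of once per input.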

Do not let every paragraph float at the same altitude

A common AI drafting problem is flatness.

Every paragraph sits at the same level of abstraction. Nothing zooms in. Nothing zooms out.

A stronger technical description varies altitude.

One paragraph may introduce the broader system.

The next may zoom into a key module.

The next may walk through a representative flow.

The next may describe an alternate implementation.

The next may explain how the system handles uncertainty.

That variation gives the document depth.

It also keeps the writing more engaging and easier to follow.

Build around what competitors would actually copy

This is a strategic point, not just a drafting point.

When adding technical detail, make sure you are deepening the parts that matter to the business.

What hidden process gives your system an edge?

What logic would a competitor borrow if they wanted your results?

What architecture choice creates speed, trust, cost savings, safety, or scalability?

These are the parts worth describing carefully.

Do not spend all your detail budget on side features while leaving the real leverage layer too thin.

This is where patent thinking and business thinking should meet.

One of the biggest mistakes in technical writing is spending too much time describing what is visible and not enough time describing what is defensible.

That is a problem because competitors usually do not win by copying the surface.

They win by copying the engine under the surface.

They copy the hidden workflow that makes the product feel fast. They copy the ranking logic that makes the output feel smart. They copy the system path that reduces cost. They copy the trust layer that makes adoption easier. They copy the control logic that makes the system more stable. They copy the internal structure that gives the business its real edge.

That is the layer your technical description should capture.

If your write-up explains the outer shell but leaves the deeper advantage vague, you may end up documenting the product without really protecting the value.

The Most Valuable Thing Is Often Not the Most Obvious Thing

This is where many startups get tripped up.

The feature customers talk about most is not always the feature competitors most want to copy.

Customers may love the dashboard, the speed, the clean user flow, or the recommendation quality. But the thing that makes those outcomes possible may sit somewhere deeper in the stack.

It might be the way inputs are filtered before scoring.

It might be the way the system decides when to escalate to human review.

It might be the way confidence is handled across multiple data sources.

It might be the way computation is split across local and remote systems.

It might be the way one process reuses prior state to cut latency.

Those deeper mechanics are often the real advantage.

That means your description should not only follow what looks impressive from the outside. It should follow what creates the advantage on the inside.

Ask the Copycat Question Early

A very practical move is to ask one direct question before expanding the draft:

If a fast-moving competitor wanted similar results in the shortest possible time, what would they copy first?

This is a better question than asking what the product does.

It forces the team to think about the real leverage point.

Sometimes the answer is obvious. Sometimes it is not. But either way, the discussion is valuable.

It shifts the writing process away from general explanation and toward strategic explanation.

Once the team has an answer, compare it to the current draft. Is that copy point described clearly? Is it explained in a way that shows how it works? Is it supported with enough detail that it does not disappear into vague language?

If not, the draft may still be missing the most important part.

Separate “Nice to Have” Detail From “Must Protect” Detail

Not all technical detail has the same value.

Some details are helpful for understanding the system, but they are not central to what makes the business hard to copy.

Other details are absolutely core.

Strong drafting makes that distinction.

If you spend too much time expanding secondary implementation choices while leaving the real moat too thin, the document may become longer without becoming stronger.

A smart internal habit is to divide the invention into three layers.

The first layer is the core advantage. This is the part that gives the company real leverage and would hurt most to lose.

The second layer is supporting structure. These are the pieces that help the core advantage work in practice.

The third layer is incidental implementation. These are details that may matter for one embodiment but do not carry the same strategic weight.

Once you see the invention this way, you can choose where to add the richest technical detail.

The first layer deserves the most care.

Look for the Hidden Conversion Point

In many products, the real technical edge lives at a conversion point.

This is the point where raw input becomes useful output.

It is where signals become rankings.

It is where model output becomes action.

It is where user data becomes a tailored workflow.

It is where uncertainty becomes a decision.

It is where noisy inputs become a stable control path.

Competitors often focus on these conversion points because they are the shortest path to recreating value.

That makes them extremely important in technical descriptions.

If your invention has one of these moments, describe it well.

Explain what enters that stage.

Explain what logic is applied.

Explain what checks or filters are used.

Explain what determines the final output.

Explain what happens when the result is weak, uncertain, or conflicting.

That is often where your description becomes much more useful.

Protect the Shortcut, Not Just the Full System

Competitors do not always copy an entire product.

Sometimes they copy the shortcut.

They identify the one internal method that gives most of the value and rebuild just that part inside their own system.

This is why businesses should review technical descriptions with a very sharp question in mind:

What is the smallest part of our system that another company could copy and still get a major benefit?

That part deserves serious attention.

Maybe your full product has ten moving pieces, but the real shortcut is the prioritization logic.

Maybe it is the pre-processing step that improves later model accuracy.

Maybe it is the system that suppresses bad outputs before they reach the user.

Maybe it is the control loop that keeps performance stable under changing conditions.

That shortcut is often where the business risk sits.

Describe that clearly.

Do not assume the full architecture alone will do the job.

Use Internal Business Knowledge to Guide Technical Depth

This is where founders, product leads, and engineering leads can add huge value.

They often know things that are not obvious from the draft alone.

They know which feature drives renewals.

They know which system behavior wins enterprise trust.

They know which process solved a pain point competitors still struggle with.

They know which internal breakthrough made the product commercially viable.

That knowledge should shape where the technical depth goes.

A document that is technically accurate but blind to business importance may miss the best chance to protect what matters.

So before expanding a section, ask the people closest to the business which hidden system behavior matters most commercially.

Then make sure the description gives that behavior the attention it deserves.

Map the Competitor Workaround Path

Here is a highly actionable exercise that many businesses should use.

Imagine a competitor reads your public product materials and understands the broad problem you solve. They want to imitate the result but avoid copying your product exactly.

What workaround path would they take?

Would they swap out the interface but keep the same decision flow?

Would they use a different model but preserve the same scoring pipeline?

Would they change the hardware form but keep the same sensor-processing logic?

Would they rename the components but follow the same sequence of operations?

This exercise helps reveal which technical details are truly central and which are easier to replace.

The more likely a workaround path still depends on your underlying method, the more important that method becomes in the write-up.

That is the part you should build around.

Focus on What Creates Repeatable Advantage

Some technical features are impressive once.

Others create repeatable advantage.

That is the difference between a novelty and a moat.

If a competitor copied one part of your system, what would keep giving them value day after day?

Would it be the data handling logic that improves performance over time?

Would it be the automation path that reduces manual cost?

Would it be the verification layer that makes the system trusted in high-risk settings?

Would it be the architecture that allows the system to scale without breaking?

Those repeatable advantage points are especially important to describe well.

They often matter more than flashy one-time behaviors because they tie directly to long-term business outcomes.

Do Not Over-Invest in Easily Replaceable Detail

A common drafting mistake is spending too much effort describing details that a competitor could swap out without losing the main benefit.

This can create a false sense of strength.

For example, if the invention’s value is really in the decision framework, it may not help much to over-focus on one specific interface layout. If the strength lies in the way signals are combined, too much detail about one vendor-specific model or one stack choice may not be the best use of the description.

That does not mean these details should never appear.

It means they should not crowd out the parts that truly matter.

A strong business-minded review asks whether the detail being added would still matter if a competitor changed the packaging and kept the core logic.

If the answer is no, it may deserve less emphasis.

Build Detail Around the Moment of Differentiation

Every strong product has a moment where it becomes different in a way customers feel.

Maybe the result becomes faster.

Maybe it becomes more accurate.

Maybe it becomes safer.

Maybe it becomes easier to trust.

Maybe it becomes more adaptive.

The technical description should trace that user-facing difference back to the underlying mechanism.

In other words, find the moment of differentiation and then explain the system choices that create it.

Do not stop at saying the product is better.

Show where the system becomes better.

Show what step, interaction, or logic path produces that difference.

This is one of the strongest ways to make a description more strategic.

Use a “Why Would They Steal This?” Review Pass

Here is a very simple and effective review method.

After drafting a section, read it and ask:

Why would a competitor want to steal what is described here?

If the answer is weak or unclear, the section may be too focused on generic detail.

If the answer is strong, ask a second question:

Does the section explain enough of the mechanism that the business value of this part is visible?

This review pass helps connect technical writing to competitive reality.

It is especially helpful for founders who want to make sure the document is not just accurate, but strategically useful.

Bring Sales, Product, and Engineering Views Together

This is a strong business move that many teams overlook.

Engineering often knows how the system works.

Product often knows which workflow matters most to adoption.

Sales or customer-facing leaders often know what buyers react to and where competitors seem weakest.

When those views come together, the team gets a much clearer picture of what is truly worth protecting.

That does not mean every review needs a big meeting.

Even a short conversation can help answer a powerful question:

What technical behavior creates the result the market actually cares about?

Once that answer is clear, the write-up can go deeper in the right place.

Describe the Part That Saves Time, Money, or Risk

Competitors usually chase the parts that change business outcomes.

They copy what cuts cost.

They copy what reduces latency.

They copy what lowers manual work.

They copy what improves trust or safety.

They copy what makes scaling easier.

These are often the most commercially important technical layers in a system.

So when deciding where to add more technical detail, ask which part of the invention changes one of these outcomes in a durable way.

That is often the part worth expanding most carefully.

A section that clearly explains how your system lowers one of these business pressures can be much more valuable than a section full of general architecture detail alone.

Build Descriptions That Still Matter After the UI Changes

User interfaces change all the time.

Competitors know this.

They can change colors, flows, names, layouts, and user journeys while still copying the deeper value engine underneath.

That is why businesses should be careful not to over-anchor technical descriptions to the visible product surface.

The better move is to ask what remains true even if the user experience changes.

What decision logic still matters?

What data flow still matters?

What control mechanism still matters?

What validation or ranking path still matters?

Those are the parts to build around if you want the write-up to stay useful over time.

One Good Internal Prompt Can Improve This Fast

A very practical internal prompt for teams is this:

“Assume a competitor wants our core outcome but does not care about copying our interface or brand. Which technical method, system logic, or hidden workflow would they most likely reuse, and have we described that part clearly enough that its real value is visible?”

That one prompt can open up a much stronger discussion.

It gives the team a strategic lens.

It helps founders identify whether the write-up is centered on the right layer.

And it often reveals where more technical detail should go next.

The Strongest Technical Detail Protects Business Leverage

This is the bigger idea underneath the whole section.

Technical writing should not become a random dump of engineering facts.

It should become a sharper explanation of the parts of the invention that create real leverage for the business.

When you build around what competitors would actually copy, the writing gets more useful.

It becomes more strategic.

It becomes more aligned with market reality.

And it becomes more likely to support protection that matters when the stakes get higher.

That is the standard worth aiming for.

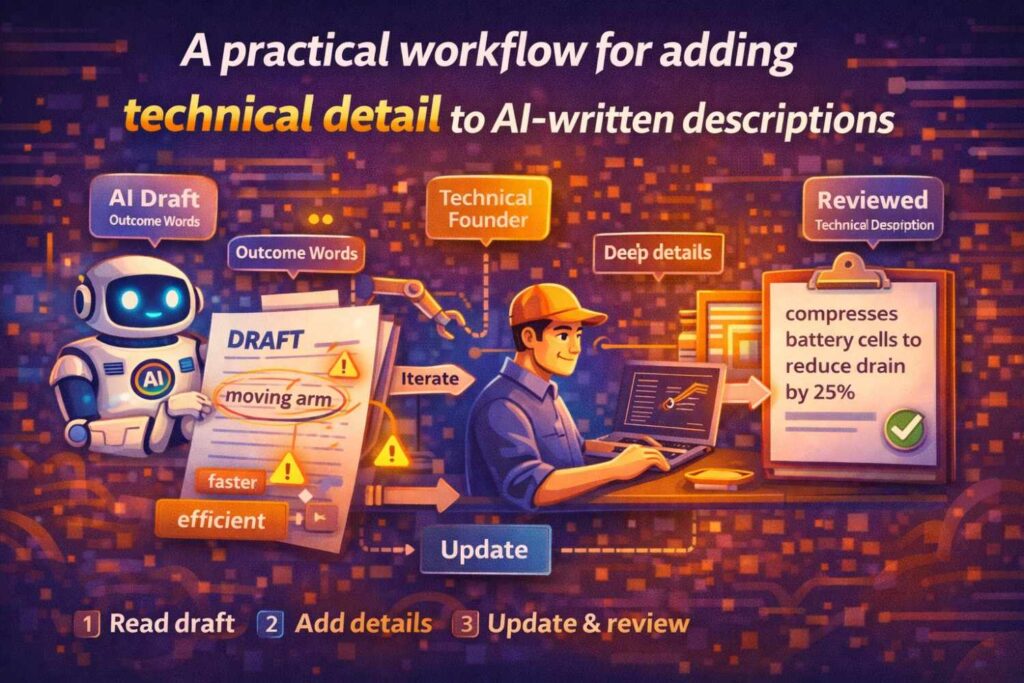

A practical workflow for adding technical detail to AI-written descriptions

Let us make this concrete.

Suppose you start with an AI-written description that is one page long. It says your platform uses machine learning to detect security threats and trigger responses in real time.

That is a start. But it is still broad.

First, pull in your raw material. Gather the design doc, the event flow notes, the policy logic, the model explanation, and any system diagrams.

Next, identify where the description uses outcome words without explanation. Mark phrases like detects threats, evaluates risk, triggers responses, and improves security posture.

Then expand each of those phrases.

What signals are ingested?

How are they normalized?

What features are extracted?

What score is generated?

What threshold is applied?

What response actions are available?

How is an action chosen?

What happens when the score is uncertain?

Then name the main system components. You may have an event intake layer, a feature generation module, a threat scoring engine, a policy evaluator, and a response orchestrator.

Then explain the flow. Events arrive. Features are generated. A threat score is computed. Policy logic maps the score and event context to a response level. If the score exceeds a threshold and the response level permits automatic action, the system triggers the selected action. Otherwise, the event may be escalated or logged for review.

Then add one concrete embodiment. Then add one or two alternatives. Then add optional conditions or fallback behavior.

Now the original AI text has turned into something with real technical depth.

That is the process.
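The flow in this workflow can also be sketched in a few lines of Python. Treat this as one possible embodiment, not the way any real product works. The feature names, the weights, the `0.3` and `0.5` cutoffs, and the rule that critical assets go to a human are all assumptions made up for the example.

```python
from dataclasses import dataclass
from enum import Enum


class ResponseLevel(Enum):
    LOG_ONLY = "log_only"
    ESCALATE = "escalate"
    AUTO_BLOCK = "auto_block"


@dataclass
class Event:
    source_ip: str
    failed_logins: int
    asset_is_critical: bool


def generate_features(event: Event) -> dict:
    """Feature generation module: turn a raw event into numeric features."""
    return {
        "failed_logins": min(event.failed_logins, 20) / 20.0,
        "critical_asset": 1.0 if event.asset_is_critical else 0.0,
    }


def threat_score(features: dict) -> float:
    """Threat scoring engine: a weighted sum standing in for a trained model."""
    return 0.7 * features["failed_logins"] + 0.3 * features["critical_asset"]


def evaluate_policy(score: float, event: Event) -> ResponseLevel:
    """Policy evaluator: map the score and event context to a response level."""
    if score < 0.3:
        return ResponseLevel.LOG_ONLY
    # Assumed rule: critical assets go to a human instead of an automatic block.
    if event.asset_is_critical:
        return ResponseLevel.ESCALATE
    return ResponseLevel.AUTO_BLOCK


def handle_event(event: Event, threshold: float = 0.5) -> str:
    """Response orchestrator: act automatically only when both the score
    and the policy allow it; otherwise escalate or log for review."""
    score = threat_score(generate_features(event))
    level = evaluate_policy(score, event)
    if score > threshold and level is ResponseLevel.AUTO_BLOCK:
        return f"block {event.source_ip}"
    if level is ResponseLevel.ESCALATE:
        return "escalate for review"
    return "log for review"
```

Each function maps to one named component, and each branch maps to one sentence a description could state. If you cannot write a sketch like this from your draft, the draft is probably still missing a decision rule.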

Review the finished draft like a skeptic

Once you add detail, do not assume the work is done.

Read the draft like a skeptic.

Ask whether every major claim is supported by explanation.

Ask whether any paragraph still sounds impressive without teaching anything.

Ask whether the invention is described through one narrow implementation only.

Ask whether optional features accidentally sound required.

Ask whether you can now draw the system and explain the flow.

Ask whether the core inventive point is actually visible.

This final review is where weak spots often surface.

Better technical detail leads to better patent work

This article focuses on improving AI-written descriptions, but the impact goes further.

When technical detail improves, patent drafting improves.

Inventor review gets easier.

Attorney review gets more productive.

Claim strategy gets better support.

Continuation options often improve.

Business alignment gets clearer.

This is why founders should care so much about the quality of the description stage. A weak description often creates downstream weakness everywhere else.

A strong one creates leverage.

Why the old approach breaks for modern startups

Traditional drafting processes often fail modern technical teams because the source detail is trapped in engineering work, while the legal draft gets built through thin summaries and delayed interviews.

That gap creates generic descriptions.

AI can help bridge part of that gap, but only if the team knows how to add real technical detail instead of stopping at the first polished output.

That is why startup teams need a better workflow, not just another writing tool.

PowerPatent helps founders and engineers capture what they actually built, shape it into stronger technical descriptions, and move toward filing with the benefit of real patent attorney oversight. That means less guesswork, fewer thin drafts, and a much stronger connection between the invention and the application. See how it works here: https://powerpatent.com/how-it-works

The goal is not more words. It is more truth

This may be the most important idea in the whole article.

Adding technical detail does not mean inflating the draft.

It does not mean throwing in jargon.

It does not mean making every sentence longer.

It means adding more truth.

More real sequence.

More real structure.

More real conditions.

More real explanation.

More real design logic.

That is what transforms AI-written descriptions from generic shells into useful technical assets.

What founders should do now

If you already have AI-written invention descriptions, do not throw them away.

Use them as raw material.

Pull up one of them and read it closely.

Mark every place where the text says what the system does without showing how.

Mark every vague verb.

Mark every paragraph you cannot sketch.

Mark every place where “AI” is doing the work of actual explanation.

Then go back to your source notes, your engineers, your system diagrams, and your code logic.

Pull in the missing mechanism.

Name the parts.

Show the flow.

Add the conditions.

Add one real example.

Add one alternate path.

Then read it again.

That is how you turn a smooth draft into a strong one.
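The marking step can be partly automated. Here is a small Python sketch that flags outcome phrases in a draft so a person can go back and add the mechanism. The phrase list is only a starting assumption, and the name `flag_vague_phrases` is hypothetical. A script like this finds candidates. It does not judge whether a sentence is actually thin.

```python
import re
from typing import List, Sequence, Tuple

# Assumed starter list; extend it with the vague phrases your own drafts use.
VAGUE_PHRASES = (
    "detects threats",
    "evaluates risk",
    "triggers responses",
    "improves security posture",
    "processes the data",
    "uses ai",
    "uses machine learning",
)


def flag_vague_phrases(
    draft: str, phrases: Sequence[str] = VAGUE_PHRASES
) -> List[Tuple[int, str]]:
    """Return (line_number, phrase) for every listed phrase found in the draft.

    Matching is case-insensitive and respects word boundaries.
    """
    hits = []
    for line_number, line in enumerate(draft.splitlines(), start=1):
        lowered = line.lower()
        for phrase in phrases:
            if re.search(r"\b" + re.escape(phrase) + r"\b", lowered):
                hits.append((line_number, phrase))
    return hits
```

Every hit is a prompt for a question: what signals, what rule, what threshold, what order. The answers still have to come from your engineers and your source notes.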

Knowing that AI-written technical descriptions need more depth is useful.

Acting on that knowledge inside a real company is what creates value.

That is the point where most teams stall.

They agree the draft is too broad. They agree the wording needs more substance. They agree the current write-up does not fully reflect the invention. Then nothing happens. The document sits in a folder. The engineers move on. The founder gets pulled into hiring, sales, fundraising, and shipping. The weak description stays weak.

That is a preventable problem.

The right move is not to wait until everything is perfect. The right move is to create a simple, repeatable way to improve technical descriptions while the invention still feels alive inside the team.

That process does not need to be heavy. It does need to be intentional.

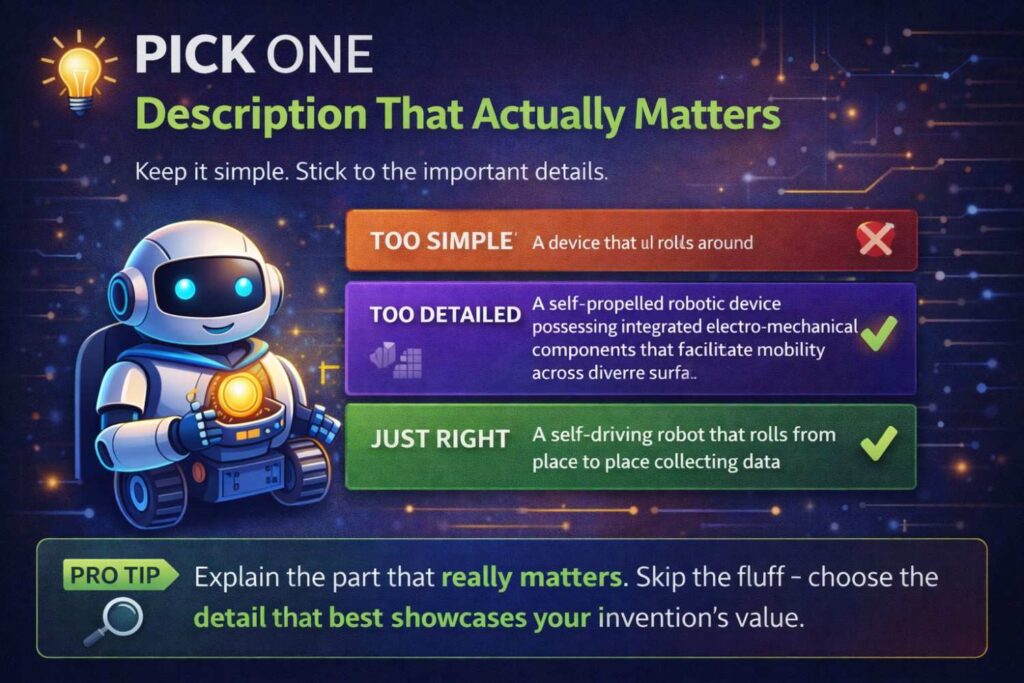

Pick One Description That Actually Matters

Do not start by trying to fix every draft your company has ever produced.

That usually creates drag and leads nowhere.

Start with one description tied to something that matters now. Pick the invention that connects most directly to product advantage, future revenue, investor story, or competitive risk.

That focus matters.

A lot of companies spread their effort too thin. They polish secondary ideas while the core technical advantage stays described in vague terms. That is backward.

A better question is this: if your company could only improve one invention write-up this quarter, which one would have the highest business value?

That is where the effort should go first.

This helps in two ways. It makes the work manageable, and it aligns the drafting effort with what the business actually needs protected.

Turn “We Should Improve This” Into an Owner and a Deadline

This is one of the simplest and most useful things a founder can do.

Most drafting problems do not persist because they are hard. They persist because no one clearly owns the next step.

If you want better technical descriptions, assign an internal owner. That person does not need to be the founder, and does not need to be the primary inventor. They need to be responsible for moving the description from rough AI output to real technical substance.

That means collecting source material, scheduling a short inventor session, running review passes, and making sure the draft gets stronger instead of sitting untouched.

Give that work a real deadline.

Not an open-ended goal. A real date.

Without an owner and a date, even good intentions usually disappear inside the normal speed of startup life.

Build a “Technical Truth Pass” Into Your Process

One very useful internal habit is to create a review pass that is not about grammar, style, or legal language.

It is only about technical truth.

During this pass, the team should ask whether the description reflects what really happens in the system. Not what the company says in demos. Not what sounds polished in a deck. What actually happens.

This is where founders can create a strong discipline inside the business.

Take the AI-written draft and ask the inventors or technical leads to mark every sentence that feels directionally right but technically thin. Then ask them to replace each weak section with what the system truly does.

This is often the fastest way to turn vague writing into something much more defensible.

It also helps keep the company honest about where the invention really lives.

Create a Short “Missing Detail” Interview Template

A founder does not need to run a long legal meeting to improve a technical description.

A short, focused interview often works better.

Create a standard internal set of prompts that the team can answer whenever an AI-generated draft needs real depth. Keep it tied to the invention, not to legal theory.

Ask what the system receives first.

Ask what happens next.

Ask what decision point matters most.

Ask what data or signal changes the path.

Ask what part was hardest to get right.

Ask what happens when normal conditions fail.

Ask which implementation details are examples and which are core.

Ask what a close competitor would try to copy if they understood the system.

These questions should live in your company process, not only in someone’s head.

Once that becomes standard, improving descriptions gets much faster and much more repeatable.
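One lightweight way to keep the template out of someone's head is to store it as data next to your invention notes. The Python sketch below is an assumption about how a team might do that. The names `MISSING_DETAIL_QUESTIONS` and `unanswered` are hypothetical, and the questions are the ones listed above, reworded as direct questions.

```python
from typing import Dict, List

MISSING_DETAIL_QUESTIONS = [
    "What does the system receive first?",
    "What happens next?",
    "Which decision point matters most?",
    "What data or signal changes the path?",
    "What part was hardest to get right?",
    "What happens when normal conditions fail?",
    "Which implementation details are examples and which are core?",
    "What would a close competitor try to copy if they understood the system?",
]


def unanswered(answers: Dict[str, str]) -> List[str]:
    """Return the template questions that have no answer or only whitespace."""
    return [
        question
        for question in MISSING_DETAIL_QUESTIONS
        if not answers.get(question, "").strip()
    ]
```

After an inventor session, run the answers through `unanswered` and you have the follow-up list for the next conversation.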

Link Technical Description Work to Business Milestones

This is where founders can become far more strategic.

Do not treat stronger invention descriptions as random cleanup work. Tie them to business moments.

If a fundraising process is coming, sharpen the description of the technical edge investors will care about.

If an enterprise deal is in motion, improve the description of the system layer that makes the product hard to replace.

If a product launch is coming, strengthen the description of the new technical advantage before it becomes public.

If patent filing is approaching, improve the core invention write-up while inventors still remember why the design works.

When description work is tied to a visible business milestone, it is far more likely to happen well and on time.

This also helps teams understand why the work matters.

Build a Habit of Capturing the “Why It Finally Worked” Layer

A lot of weak invention descriptions explain what the team built, but not what made it finally work after earlier attempts failed.

That missing layer often contains the best technical value.

Founders should make it a habit to ask teams this question after major technical wins: what was the design change, decision rule, data path, or system arrangement that finally made this workable?

That answer is often more valuable than the first summary of the feature.

It reveals the real turning point.

It also helps separate ordinary implementation from actual invention.

When that “why it finally worked” layer becomes part of the description process, the output gets much stronger and much more useful for the business later.

Do Not Let Product Marketing Language Leak Into Core Technical Records

This is a very practical warning.

As startups grow, product language gets stronger. Messaging gets cleaner. Teams learn how to describe benefits in a simple and compelling way.

That is useful for growth.

It can be harmful when it takes over invention records.

If the internal source material becomes full of benefit claims and polished brand language, the company may slowly lose the direct technical explanation needed for strong patent writing.

Founders should protect against that.

One good internal practice is to keep a separate technical record for major inventions. Not a marketing page. Not a launch summary. A plain internal write-up that explains the mechanism, flow, architecture, and logic in direct terms.

That record becomes incredibly valuable later when the company needs to prepare patents, diligence material, or technical defensibility stories.

Decide What Level of Detail the Business Actually Needs

Not every technical description needs the same depth.

That is an important leadership call.

Some descriptions only need enough detail to preserve invention memory and prepare for later expansion.

Others need enough depth to support near-term filing.

Others should be strong enough to stand up in investor or diligence review.

Founders should decide which level each important invention requires.

This helps allocate time better.

A common mistake is overworking low-value descriptions and underworking the ones tied to the company’s real moat.

A better approach is to sort invention descriptions by strategic importance and then apply the right level of detail accordingly.

This makes the process more efficient and much more aligned with business reality.

Use Internal Reviews to Surface Future Variants Early

One of the most useful things founders can do is push teams to think one step past the current implementation.

Not in a vague way. In a grounded way.

When reviewing an AI-written description, ask what likely version of the system will exist six to twelve months from now that still uses the same core idea.

That question often surfaces important future variants.

Maybe the model will move on-device.

Maybe the ranking logic will become adaptive.

Maybe the workflow will expand to a second customer type.

Maybe the current manual check will become automatic.

Maybe the system will shift from centralized to distributed execution.

If those paths are real and already visible, they should shape how the technical description is improved now.

This is a smart business move because it helps the writing stay useful longer.

Make Description Improvement a Cross-Functional Exercise

Founders should not assume the best technical description will come only from engineering.

Engineering is critical, of course. But product leaders often understand where the system is heading. Operations teams may know where the system breaks under real-world pressure. Customer-facing teams may know what part of the experience depends on hidden technical logic that competitors would struggle to match.

That means improving technical descriptions can benefit from more than one lens.

The founder’s role is to pull the right people in at the right moment.

Not for a giant committee review. Just enough to make sure the description reflects technical truth, product direction, and business importance.

This makes the final output far more valuable.

Create a “Would This Still Matter in a Year?” Check

Here is a simple review habit that is very useful for founders.

After a draft has been improved, ask one direct question: if the company keeps growing and the product evolves over the next year, will this description still capture something important?

If the answer is no, the draft may still be too tied to temporary implementation details.

That does not mean the current version is irrelevant. It means the description may not yet reflect the deeper technical idea that gives it lasting value.

This check is especially important for companies building quickly. Product surfaces change. Internal logic often evolves. The best technical descriptions preserve what matters through that change.

Keep the Raw Notes, Not Just the Clean Draft

This may seem small, but it matters a lot.

When teams improve AI-generated descriptions, they often keep only the cleaned-up version. The rough notes, follow-up answers, inventor comments, and rejected alternatives disappear.

That is a mistake.

Those raw materials often contain valuable technical detail that may matter later, even if it does not all go into the first polished draft.

A variation that felt secondary today may become the center of a later filing.

A design explanation that seemed too detailed for one document may become important in attorney review.

A question raised during the editing process may reveal a second invention path.

Founders should make sure those materials are saved in an organized way.

That creates a stronger long-term IP base for the company.

Use Better Descriptions to Improve More Than Patents

This is an important strategic point.

A stronger technical description is not only useful for patent filing.

It can help in fundraising by clarifying the real technical edge.

It can help in diligence by showing that the company understands its own defensibility.

It can help in onboarding by teaching new engineers how the system really works.

It can help in roadmap planning by making invention boundaries easier to see.

It can help in internal alignment by giving teams a cleaner shared view of what is actually differentiated.

That means founders should not see this work as legal overhead.

Done well, it strengthens the business in multiple ways at once.

Set a Standard for What “Good Enough” Looks Like

Many companies fail to improve technical descriptions because they never define success.

The team keeps saying the draft needs more detail, but no one knows when it has enough.

A founder can fix that by creating a simple internal standard.

A strong description should make the main system or method understandable to a technical reader. It should explain what changed compared with earlier approaches. It should show the key mechanism, not just the result. It should describe the important decision points, not just the beginning and end. It should reflect the business-critical layer of the invention. And it should leave room for realistic future growth where appropriate.

That standard does not need to be long. It does need to be clear.

Once the team knows what “good enough” means, the work gets easier to manage.

Turn This Into a Repeatable Company Habit

The biggest business win is not improving one document.

It is creating a system that improves every important document going forward.

That means founders should think beyond the current draft and ask what repeatable habit the company needs.

Maybe it is a monthly invention review.

Maybe it is a short technical write-up after major architecture changes.

Maybe it is an internal owner who runs the first AI-assisted pass on invention notes.

Maybe it is a standard review step before outside counsel sees a draft.

Whatever the method, the goal is to stop relying on memory and improvisation.

Strong companies build repeatable invention capture and description habits.

That is what turns technical work into real IP leverage.

The Fastest Next Step for Most Founders

For most founders, the best next move is simple.

Pick one important AI-written description this week.

Schedule one short session with the people who know it best.

Use that session to surface the missing mechanism, the critical decision points, the likely future variants, and the technical layer a competitor would actually want to copy.

Then revise the document with those points in mind.

That one step can change the quality of the draft dramatically.

And once the company sees the improvement, it becomes much easier to make the process repeatable.

PowerPatent Makes This Easier to Do Well

Founders do not need more complexity here. They need a better workflow.

PowerPatent helps startups move from rough invention notes and AI-assisted drafts to stronger patent-ready descriptions by combining smart software with real attorney oversight. That means teams can capture better technical detail, improve the substance behind the writing, and move faster without sacrificing quality. You can see how it works here: https://powerpatent.com/how-it-works

Better Action Now Creates Better Protection Later

This is the real takeaway.

If your company is already using AI to help with technical descriptions, you are not starting from zero.

That is good.

But the real advantage comes from what you do next.

If you add technical truth, strategic depth, and a repeatable process now, you do not just improve one write-up. You strengthen the company’s future patent filings, its internal clarity, and its ability to protect what actually matters.

That is why founders should act now.

Not because the writing needs to look better.

Because the business needs the truth captured while there is still time to use it.

Final thought

AI can help you start.

It cannot replace the need for technical truth.

The strongest descriptions are not the ones that sound smartest at first glance. They are the ones that explain real systems, real flows, real choices, and real invention logic in a way that holds up under scrutiny.

That is what patent-quality writing needs.

That is what real technical detail looks like.

And that is how you make AI-written descriptions worth something.